In this episode we are going to hunt for sensitive data leaks with a tool I made, LeakLooker X. I added new features to detect leaks in GitHub repositories and on anonymous FTP servers, two methods for finding Amazon S3 buckets, and a way to scan HTML source code for API keys.

So let's find some sensitive information.

The last episode, with interactive visualizations of the offshore organizations of the Polish Steamship Company, is accessible below.

To subscribe drop your email at the bottom of the website or sign up here

https://www.offensiveosint.io/signup/

You will get access to all articles, early access to the newest ones, and more over time.

OSINT & Leaks

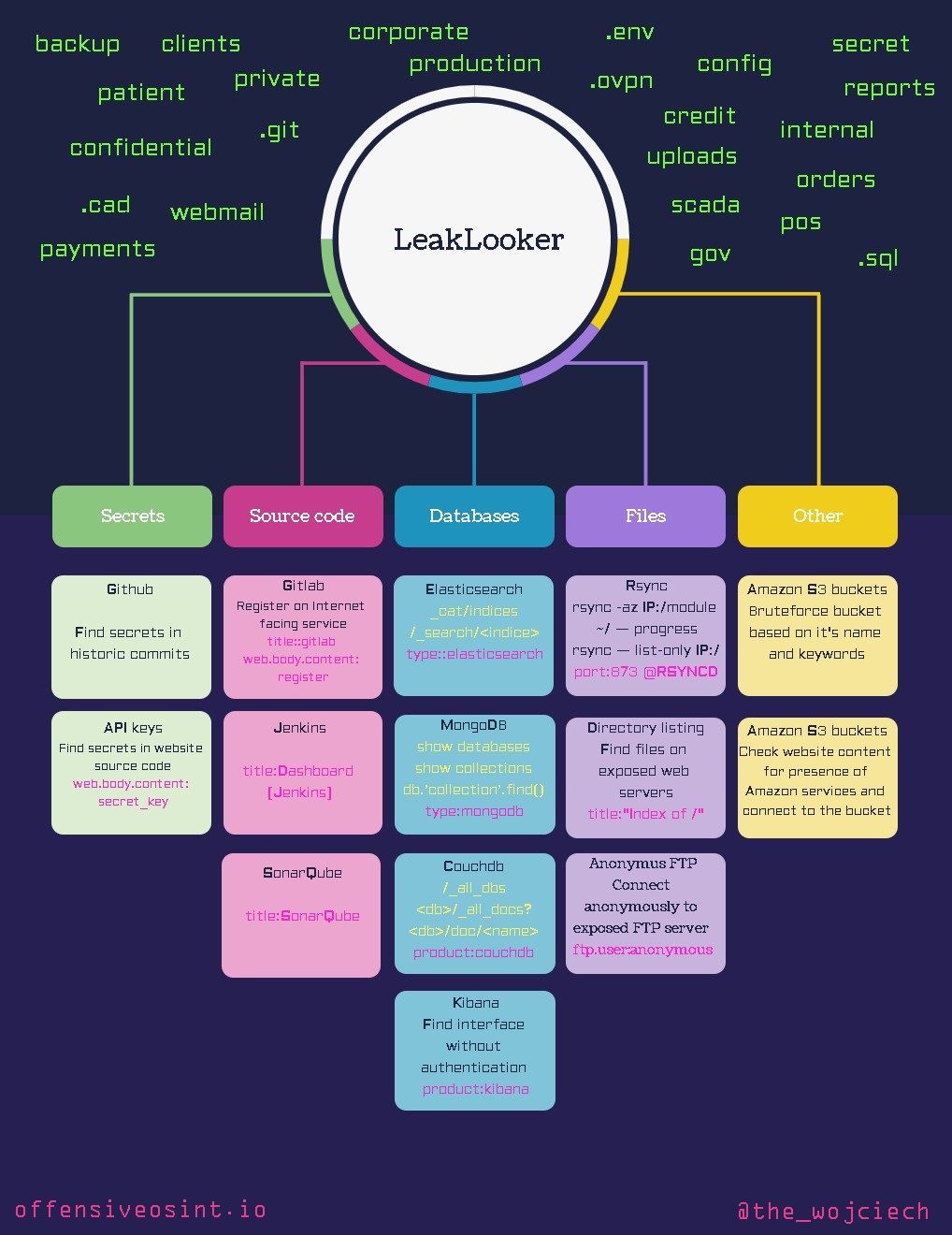

You can read about a new leak almost every week. Personal data, documents of governments and private companies, and source code are among the most common types of information that go public by accident. However, I haven't seen any research or write-up on how to track these incidents. It feels like an open secret: no one wants to reveal their methods, and most of the knowledge stays in private circles. I have already written a couple of articles about leak hunting. This time we go further, and I'm presenting a mind map that will make this hunt a walk in the park.

I distinguish three ways to find a data leak:

- Beginner/Feeling lucky

It's not hard to find private data lying around, but it is time-consuming and sometimes depends on luck. You have to be in the right place at the right time.

This group includes beginner researchers who just browse BinaryEdge without really knowing what they are doing or how to access exposed databases.

This approach has many disadvantages and is the most time-consuming of the three.

- Little bit of creativity and automation

Let's say you have the technical skills to write a simple script and to store all previous results so that later runs check only for new ones. You need to specify potential keywords and write a different script for each service type: Elasticsearch, MongoDB, etc. It's not a hard task and can be accomplished with the following code:

    import json
    import math

    import requests

    # BinaryEdge API key goes here
    headers = {'X-Key': ''}
    keyword = "orders"
    min_size = 5000000000  # ~5 GB; skip small or empty databases
    seen_file = "elastic.txt"

    page = 1
    results = True

    # Create the file on the first run so that "r+" does not fail
    open(seen_file, "a").close()

    while results:
        # %20 separates the type filter from the keyword
        end = ('https://api.binaryedge.io/v2/query/search?query='
               + "type:%22elasticsearch%22%20" + keyword + "&page=" + str(page))
        req = requests.get(end, headers=headers)
        req_json = json.loads(req.content)

        # Stop after the last page (">=" also covers an empty result set)
        pages = math.ceil(req_json['total'] / req_json['pagesize'])
        if req_json['page'] >= pages:
            results = False
        page = page + 1

        with open(seen_file, "r+") as f:
            old = f.read().splitlines()
            for i in req_json['events']:
                ip = i['target']['ip'].rstrip()
                if ip not in old:
                    indices = {}
                    size = 0
                    try:
                        for j in i['result']['data']['indices']:
                            indices[j['index_name']] = str(j['docs'])
                            size = size + j['size_in_bytes']
                        if size > min_size:
                            print("http://" + ip + ":" + str(i['target']['port']) + "/_cat/indices")
                            # Remember the IP so it is skipped on the next run
                            print(ip, file=f)
                            old.append(ip)
                            for name in indices:
                                print(name + " " + indices[name])
                    except (KeyError, TypeError):
                        # Event without index details; skip it
                        pass

First, we set the BinaryEdge API key, the keyword and the minimum size of the database (we don't want to list small or empty databases). I set the size to around 5 GB, which is enough to find many documents, and used the word 'orders' as the keyword. It's a simple one; I used it last time and discovered a lot of PII belonging to an anti-fraud detection company.

Next, we iterate over the results of the query type:"elasticsearch" combined with the keyword, check the total number of results and calculate the number of pages.

To remember IP addresses between runs, the script keeps them in a file (elastic.txt). If an IP has not been seen before, the script sums the size of all its indices and, if the total is greater than 5 GB, prints the full URL of the database along with the indices and their document counts, and writes the IP to the file.

And that's it. During some tests I already found addresses, orders, phone numbers and so on. You can run it every day or every week, and only new databases will be displayed.

- LeakLooker X

The whole LeakLooker idea has been on my mind for a long time, and a couple of months ago I finally wrote a GUI for the old console version. You can read about it here

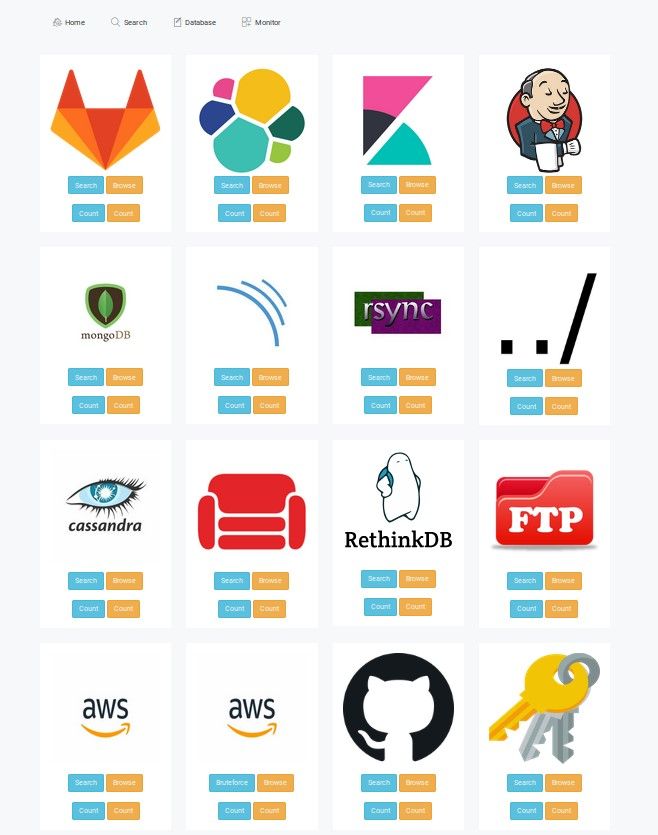

Basically, as the title states, you can discover, browse and monitor database and source code leaks on any Internet-facing system thanks to data from Binaryedge.io. So far it has supported 11 types of services, and this update adds 5 more:

- Anonymous access to FTP servers

These are mostly unsecured NAS devices that belong to private individuals, and you will find many personal documents on them: agreements, photos, notes or invoices. With proper keywords we can narrow the search to a corporate hunt; you can use, for example, "resume", "corp", "production" etc. There is also a lot of exposed source code with hardcoded tokens and passwords. In terms of non-sensitive files, you can find movies, cracked software or books.

- Amazon S3 buckets

I implemented two ways to detect Amazon buckets with public access. The first is the classic bruteforce method; it's simple but very effective if you know the proper keywords. To make it more effective, LeakLooker uses an additional wordlist of suffixes. I can provide more complex permutations on demand. The wordlist was taken from Sandcastle. If you remember my old story about the Australian government leak, you should know that this bucket had only a single string as its name.
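As an illustration of the keyword plus suffix idea, here is a minimal sketch of how candidate bucket names could be generated. The suffixes and separators are made-up sample values and `bucket_candidates` is a hypothetical helper; LeakLooker's actual wordlist and permutation logic are not shown in this article.

```python
from itertools import product

# Hypothetical sample values; the real suffix wordlist is not shown in the article.
SUFFIXES = ["backup", "dev", "prod"]
SEPARATORS = ["", "-", "."]


def bucket_candidates(keyword, suffixes=SUFFIXES, separators=SEPARATORS):
    """Return the bare keyword followed by every keyword<separator><suffix> variant."""
    names = [keyword]
    for suffix, sep in product(suffixes, separators):
        names.append(keyword + sep + suffix)
    # dict.fromkeys drops duplicates while keeping the original order
    return list(dict.fromkeys(names))


if __name__ == "__main__":
    for name in bucket_candidates("acme"):
        print(name)
```

The bare keyword stays first in the list because, as the Australian government case shows, a single string can already be a valid bucket name.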

The second way was already presented in a previous article about bucket takeover based on expired or non-existent names. This module looks for strings associated with AWS regions: "s3-us-west-2.amazonaws.com" for North America, "s3-eu-west-1.amazonaws.com" for Europe and "s3.ap-southeast-1.amazonaws.com" for Southeast Asia. I tested all the possible regions, and these three give the most results.
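To make the region-string approach concrete, here is a rough sketch that pulls bucket names out of a page that references one of the three endpoints above. It assumes the bucket appears either as a subdomain of the endpoint or as the first path segment after it; `find_buckets` is a hypothetical helper, not the module's real code.

```python
import re

# The three endpoint strings named in the article.
REGION_ENDPOINTS = [
    "s3-us-west-2.amazonaws.com",
    "s3-eu-west-1.amazonaws.com",
    "s3.ap-southeast-1.amazonaws.com",
]

# Loose approximation of allowed bucket-name characters (an assumption).
_NAME = r"([a-z0-9][a-z0-9.-]*[a-z0-9])"


def find_buckets(html):
    """Return sorted (bucket, endpoint) pairs referenced in the given HTML."""
    found = set()
    for endpoint in REGION_ENDPOINTS:
        host = re.escape(endpoint)
        # Virtual-hosted style: <bucket>.<endpoint>
        for m in re.finditer(_NAME + r"\." + host, html, re.I):
            found.add((m.group(1).lower(), endpoint))
        # Path style: <endpoint>/<bucket>, only when no bucket precedes the host
        for m in re.finditer(r"(?<![a-z0-9.-])" + host + "/" + _NAME, html, re.I):
            found.add((m.group(1).lower(), endpoint))
    return sorted(found)


if __name__ == "__main__":
    sample = '<img src="https://media-files.s3-us-west-2.amazonaws.com/a.png">'
    print(find_buckets(sample))
```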

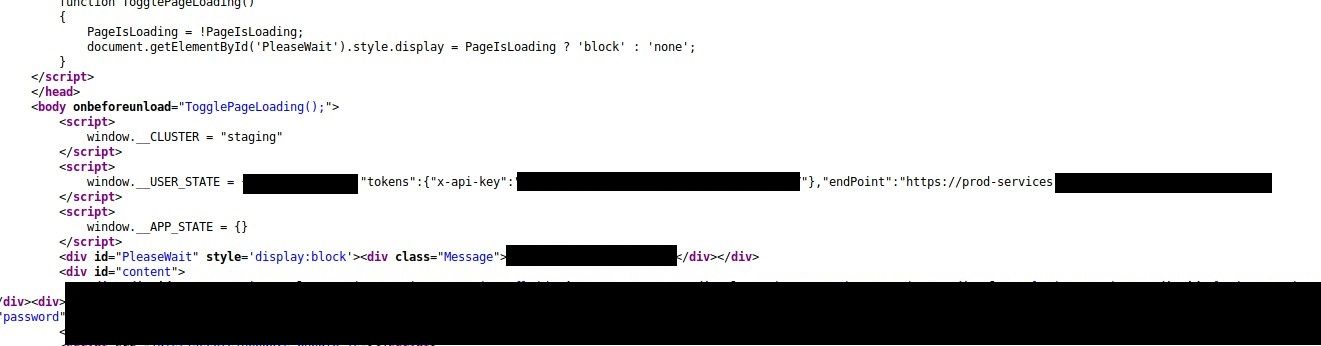

- API keys

In general, exposed authorization tokens or API keys are nothing unusual, but they do not often show up in HTML source code. However, I implemented this check for so-called "OSINT privilege escalation". This plugin looks for variable names of API keys for Stripe and Google, and for generic words like "secret_key" and "api_key". I'm aware that some keys are meant to be public and there is nothing wrong with exposing them to the world, but their presence also shows, especially in exposed developer environments, that more keys can potentially be revealed. We search for the common word "secret_key", then look around for exposed API paths in the included JS files, and gain access to an internal or developer API.
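A small sketch of this kind of variable-name scan follows. Only "secret_key" and "api_key" come from the text above; the Stripe and Google patterns are illustrative guesses at typical variable names, and `scan_html` is a hypothetical function rather than the plugin's real rule set.

```python
import re

# Assumed patterns for illustration; not LeakLooker's real rule set.
KEY_NAME_PATTERNS = {
    "generic": re.compile(r"\b(?:secret_key|api_key)\b", re.I),
    "stripe": re.compile(r"\bstripe[_-]?(?:secret|publishable|api)?[_-]?key\b", re.I),
    "google": re.compile(r"\bgoogle[_-]?(?:maps[_-]?)?api[_-]?key\b", re.I),
}


def scan_html(source):
    """Return (label, matched_name, line_number) for every key-like variable name."""
    hits = []
    for number, line in enumerate(source.splitlines(), start=1):
        for label, pattern in KEY_NAME_PATTERNS.items():
            for match in pattern.finditer(line):
                hits.append((label, match.group(0), number))
    return hits


if __name__ == "__main__":
    page = 'var config = {api_key: "abc"};\nwindow.STRIPE_SECRET_KEY = "x";'
    for hit in scan_html(page):
        print(hit)
```

A hit only says that a key-like name is present on that line; whether the value is sensitive still needs a manual look.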

- GitHub

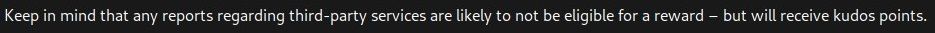

This part is based on a specially customized version of the brilliant tool TruffleHog. It goes through every commit in every branch, then scans every line against regular expressions and an entropy check. TruffleHog is a console script, so many people cannot properly read and parse its results, which makes the tool useless for them. With LeakLooker, it's enough to paste a link to the repository you want to scan, and the secrets, commits and paths will appear in a clear way, like any other results in LL. In addition, every finding is saved into a database, so you can manage findings and analyze each commit separately.
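The entropy part can be illustrated with a short Shannon entropy check. This is a sketch of the general technique, not TruffleHog's code; the minimum length of 20 and the threshold of 4.5 are assumptions chosen for the example.

```python
import math
import re


def shannon_entropy(data):
    """Shannon entropy of a string, in bits per character."""
    if not data:
        return 0.0
    entropy = 0.0
    for ch in set(data):
        p = data.count(ch) / len(data)
        entropy -= p * math.log2(p)
    return entropy


def high_entropy_strings(line, min_length=20, threshold=4.5):
    """Return base64-looking substrings whose entropy exceeds the threshold.

    min_length and threshold are assumed values for this example.
    """
    candidates = re.findall(r"[A-Za-z0-9+/=]{%d,}" % min_length, line)
    return [c for c in candidates if shannon_entropy(c) > threshold]


if __name__ == "__main__":
    print(high_entropy_strings('token = "abcdefghijklmnopqrstuvwxyzABCDEF"'))
    print(high_entropy_strings('padding = "aaaaaaaaaaaaaaaaaaaaaaaa"'))
```

Random-looking strings such as keys and tokens use many different characters and score high, while repetitive text scores low, which is why an entropy check can catch secrets that no regular expression describes.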

That being said, armed with the knowledge and the tool, we can finally go on a leak hunt.

Looting

I already presented ways to move around exposed databases and services in my previous articles, so now I will focus specifically on the new update and how to operate LeakLooker.

FTP servers expose their files by granting at least read permission to anonymous users. There are still plenty of encrypted and ransomed storage units, but I don't think this campaign has been profitable. You can scan by country, network or keyword, and the BinaryEdge query for anonymous FTP is "ftp.user:anonymous". I'm not going to attach any findings, but try LL with the FTP module for a couple of minutes with proper keywords and you will know what I'm talking about.
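For readers who want to query the API directly, a minimal sketch of building the search URL could look like this. The endpoint is the one used in the Elasticsearch script earlier in this article; appending a free-text keyword after the filter mirrors that script and is an assumption about the query syntax, and `ftp_search_url` is a hypothetical helper.

```python
from urllib.parse import quote

# Search endpoint taken from the Elasticsearch script earlier in the article.
API_SEARCH = "https://api.binaryedge.io/v2/query/search"


def ftp_search_url(keyword="", page=1):
    """Build a search URL for anonymous FTP servers, optionally narrowed by a keyword."""
    query = "ftp.user:anonymous"
    if keyword:
        # Assumption: a free-text keyword can follow the filter, separated by a space.
        query += " " + keyword
    return API_SEARCH + "?query=" + quote(query) + "&page=" + str(page)


if __name__ == "__main__":
    print(ftp_search_url("backup"))
```

The resulting URL would be requested with the `X-Key` header, as in the earlier script.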

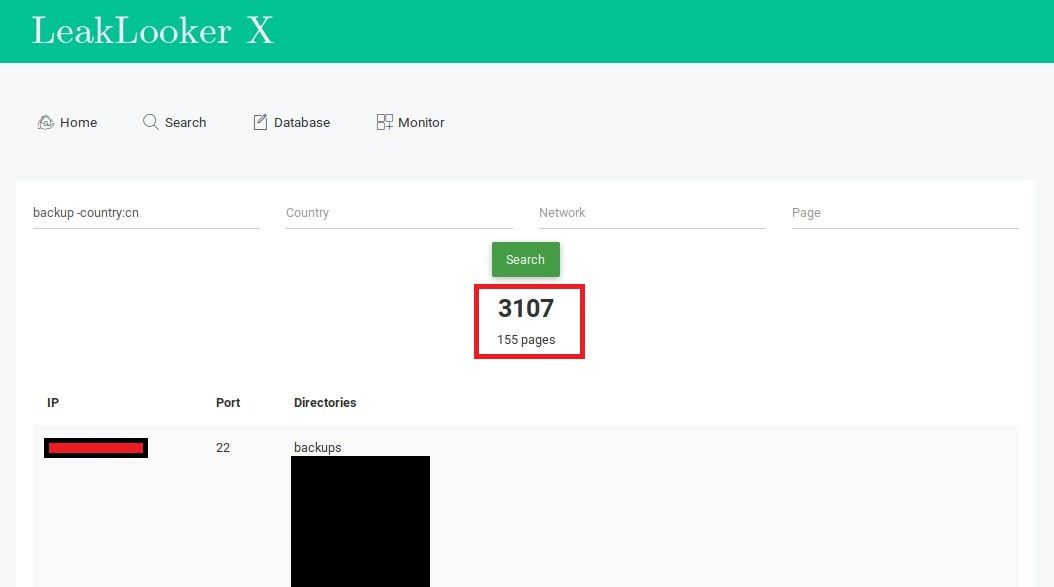

The first step is to search and estimate the potential number of results for the given parameters.

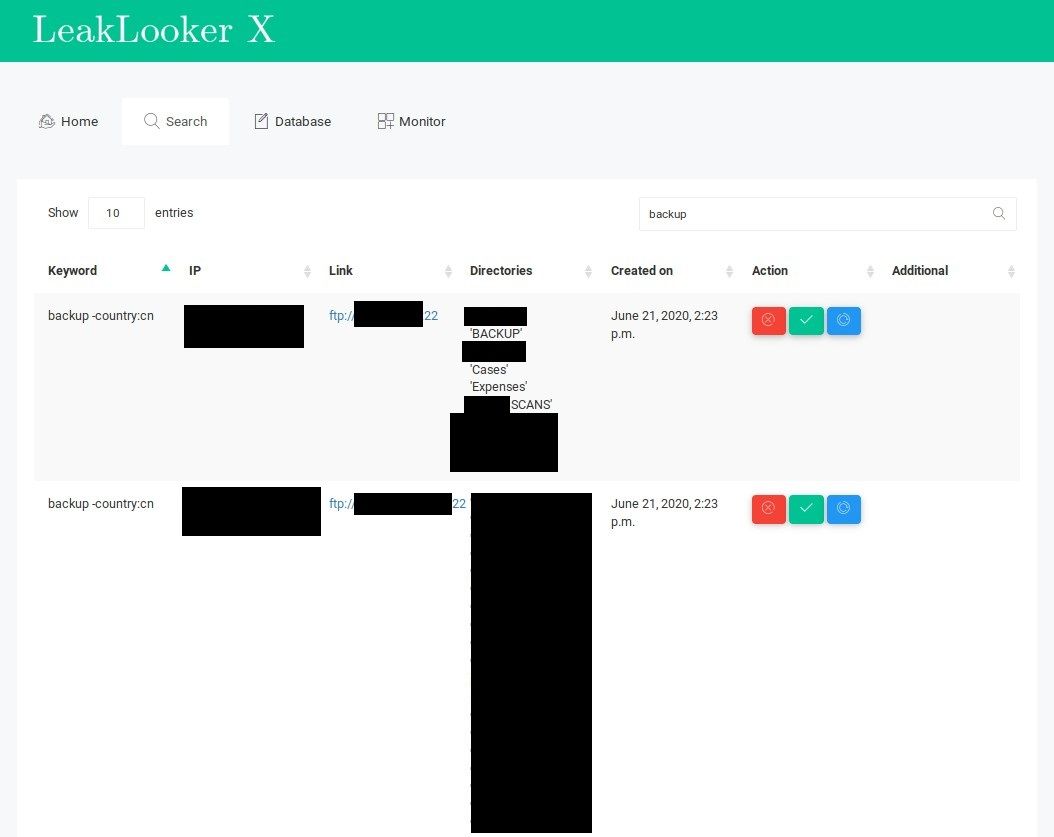

If you decide to download all results, you should check every page and then move on to the "browse" tab for the particular type, where you will find all the information collected with the "search" functionality. It's easy to manage the findings: you can confirm them, delete and blacklist them, or mark them for later review.

Knowing what kind of files you can come across on unsecured FTP servers, you should refine your keywords depending on your end goal. For example, to look for backups you can use the extension ".bak" or just "backup" combined with "production". BinaryEdge lists all the files on the FTP server, not only those in the main folder, which means you should search for every sensitive extension possible: ".eml", ".dat", ".kdbx" etc. The situation is similar to directory listing.
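If you export a file listing and want to triage it offline, a filter along these lines could help. The extensions and keywords are the ones mentioned above; the function name and the sample paths are made up for illustration.

```python
import posixpath

# Extensions and keywords mentioned in the article.
SENSITIVE_EXTENSIONS = {".bak", ".eml", ".dat", ".kdbx"}
KEYWORDS = ("backup", "production")


def interesting_files(paths, extensions=SENSITIVE_EXTENSIONS, keywords=KEYWORDS):
    """Return paths with a sensitive extension or a keyword anywhere in the path."""
    hits = []
    for path in paths:
        lowered = path.lower()
        ext = posixpath.splitext(lowered)[1]
        if ext in extensions or any(word in lowered for word in keywords):
            hits.append(path)
    return hits


if __name__ == "__main__":
    listing = ["/pub/movie.mkv", "/home/Passwords.KDBX", "/www/production/site.zip"]
    print(interesting_files(listing))
```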

I want to make the platform bigger and more versatile with additional sources and direct checks, which is why I implemented S3 bucket bruteforcing. It makes a direct request to s3.amazonaws.com with your keyword. To find an interesting leak you have two options:

- Search for common, popular brands and companies with a big wordlist (which is attached to LL) and permutations. It includes possible abbreviations of a company or its projects.

- Combine two wordlists with random bucket names and prefixes and just start bruteforcing buckets. In some cases this makes it hard to find the owner, but such buckets contain sensitive files as well.
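A direct check of a single candidate name can be sketched as follows. The virtual-hosted URL form `https://<name>.s3.amazonaws.com/` and the mapping of status codes to labels are assumptions made for this example, and both function names are hypothetical; the article does not show how LeakLooker interprets the responses.

```python
import urllib.error
import urllib.request


def classify_status(status):
    """Map an HTTP status code from a bucket URL to a rough label (assumed mapping)."""
    if status == 200:
        return "public"
    if status == 403:
        return "exists, access denied"
    if status == 404:
        return "no such bucket"
    return "unknown"


def check_bucket(name, timeout=5):
    """Request the bucket root and classify the response. Performs a network call."""
    url = "https://" + name + ".s3.amazonaws.com/"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return classify_status(resp.status)
    except urllib.error.HTTPError as err:
        # urlopen raises HTTPError for 4xx/5xx; the code is still informative
        return classify_status(err.code)
    except urllib.error.URLError:
        return "unknown"


if __name__ == "__main__":
    print(classify_status(403))
```

Only run `check_bucket` against names you are allowed to test, and add a delay between requests when looping over a wordlist.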

Besides standard bruteforcing, I brought back an old feature for detecting S3 buckets, known from the console version.

In my opinion, the most interesting part of the new update is the ability to detect secrets in a page's HTML source code and in GitHub repositories. It's not only about the finding per se but also about the OSINT privilege escalation mentioned earlier. You have to find the key, review the code and find further endpoints, often in a highly obfuscated JavaScript file.
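Finding further endpoints can start with something as simple as pulling quoted API-like paths out of a JavaScript file. The sketch below assumes the paths appear as string literals starting with "/api/" or a version prefix such as "/v2/"; real, heavily obfuscated bundles often need more work, and `extract_endpoints` is a hypothetical helper.

```python
import re

# Quoted paths that start with /api/ or /v<number>/ (an assumed convention).
_PATH = re.compile(r"""["'](/(?:api|v\d+)/[A-Za-z0-9_\-./{}]*)["']""")


def extract_endpoints(js_source):
    """Return the unique API-like paths found in string literals, sorted."""
    return sorted(set(_PATH.findall(js_source)))


if __name__ == "__main__":
    js = 'fetch("/api/users/list");var u=\'/v2/orders\';var img="/static/logo.png";'
    print(extract_endpoints(js))
```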

Most headlines go to leaks of personal data, but leaked secrets can also end badly for a company. The most famous example is of course the Uber hack, where the attacker found an exposed API key on GitHub, which made it easier to exfiltrate data.

I took a deeper look into public GitHub repositories of companies that participate in bug bounty programs. I didn't report and won't be reporting anything to bounty programs that pay no reward or that put third parties out of scope, and I encourage you to do the same. I was one step from reporting some issues, but I have self-respect, so they wait to be found by someone else, not necessarily someone with good intentions.

There is an enormous amount of exposed private data (keys, secrets), but there is no reward for these findings other than selling them illegally. Affected corporations do not care about researchers (often they don't even reply), so it's not worth the effort to make them secure (unless you found your own info). I only hunt and report findings with high intelligence value, like a government VPN configuration

Another government #dataleak from Southeast Asia. This time open VPN configuration and private key has leaked. Key belongs to the Ministry of Economy and Finance in Cambodia and was also used in development environment. Cambodia CERT promptly restricted access to the server. pic.twitter.com/cB9BU9Eu1o

— Wojciech (@the_wojciech) May 26, 2020

or an exposed Command & Control server of a Chinese(?) APT that leaked credentials for major government institutions in Southeast Asia.

It looks like webmails of Ministry of Foreign Affairs of the Republic of Indonesia @kemlu_ri Royal Malaysia Police and Malaysian Anti-Corruption Commission @sprmmalaysia have been compromised. #leaks #threatintel #infosec #breach #ThreatHunting pic.twitter.com/PcR9bmAO9f

— Wojciech (@the_wojciech) May 10, 2020

Both are findings from LeakLooker with the directory listing module.

But let's get back to the GitHub case and what loot you can collect from public repositories.

700 euro bounty for finding an exposed internal project

I had to test the new GitHub module, so I checked a large part of the repositories of companies from the list below (sort by bounty!)

The plugin is very effective and has a really clear output so scanning each repository does not take long and reviewing the results is very simple.

Using this solution, on the 16th of June, the implemented entropy check led me to a strange exposed base64-encoded string.

Below is just an example screenshot of a Yelp repository (not related to this story); I couldn't post the real one anyway.

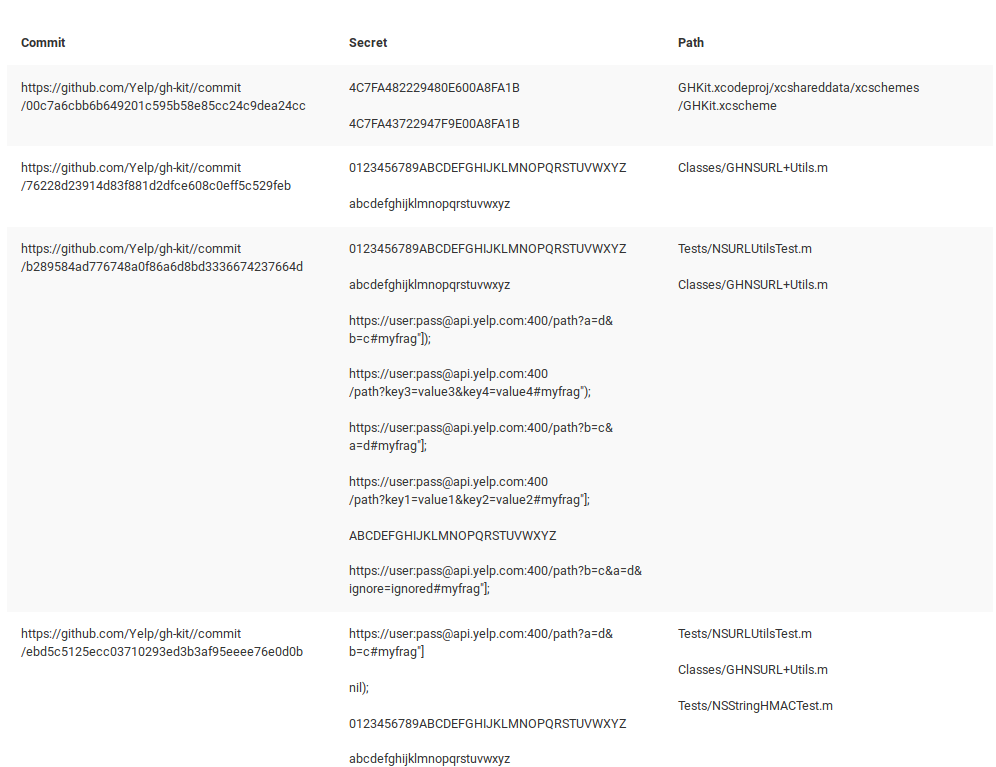

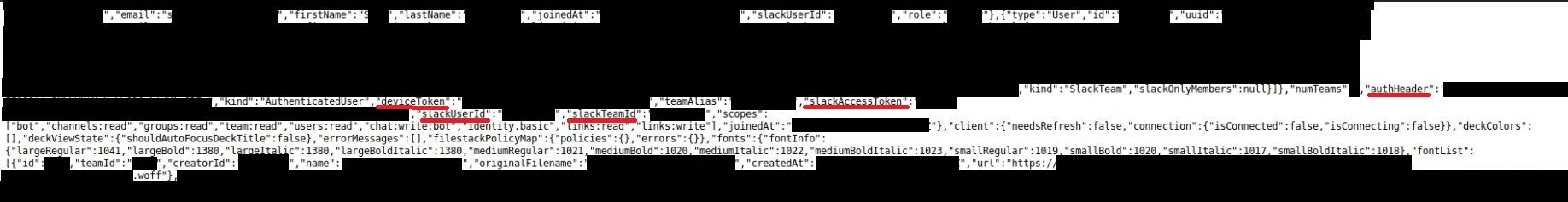

After a quick investigation, it turned out to be a Slack authentication token embedded in a large JSON object in a test HTML file.

After a deeper investigation, the same large JSON turned out to include around 600 corporate emails, first names, last names, slackUserIds, roles, types and more.
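To size up a blob like this without reading it by hand, one option is to walk the JSON and count token-like strings and email addresses. This is a minimal sketch: the "xox" token prefix and the email pattern are assumptions about the formats involved, the sample data is invented, and the function reports counts only.

```python
import json
import re

# Assumed formats: Slack-style tokens starting with "xox", and a loose email pattern.
SLACK_TOKEN = re.compile(r"xox[abprs]-[A-Za-z0-9-]+")
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def walk(node):
    """Yield every string value in a nested JSON structure."""
    if isinstance(node, dict):
        for value in node.values():
            yield from walk(value)
    elif isinstance(node, list):
        for value in node:
            yield from walk(value)
    elif isinstance(node, str):
        yield node


def summarize(raw_json):
    """Count distinct token-like strings and email addresses in a JSON document."""
    tokens, emails = set(), set()
    for text in walk(json.loads(raw_json)):
        tokens.update(SLACK_TOKEN.findall(text))
        emails.update(EMAIL.findall(text))
    return {"tokens": len(tokens), "emails": len(emails)}


if __name__ == "__main__":
    sample = '{"auth": {"token": "xoxb-EXAMPLE-ONLY"}, "users": [{"email": "a@example.com"}]}'
    print(summarize(sample))
```

Counts like these are usually enough for a report; there is no need to copy the actual personal data out of the repository.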

I got a really quick response: the report was triaged within 10 hours, and a day later the repository was made private and the issue resolved.

For the record, this project was there for ~5 months.

I submitted other reports on Bugcrowd and HackerOne that might or might not be related to this research. I can't say more, but the secrets are out there.

In addition, I'm looking for any type of cooperation - freelance or full-time. Let me know if you can help.

Access to the tool is available on demand; DM me.

Conclusion

LeakLooker is a tool for everyone, but it is most useful for corporate espionage, OSINT investigations, bug bounty hunters and cybercriminals. The line between the last two depends on what the person decides to do with the data found in the end. LeakLooker gives you an inside view of almost any data you can imagine; I found stuff that I didn't even think existed. Even if a company itself is not "vulnerable", third parties or private individuals can leak its data. When you research any company, try the tool and you might find a breakthrough.