In today's episode we discuss Missing 411 cases, selfies with tanks, and the best places to run in your city.

This is the first part of the Open Source Surveillance research, and it focuses only on the social media aspect of location-based investigation.

Read the tutorial to get familiar with the tool.

TL;DR: I will show how to write a new module for the Platform, reveal new updates (Strava, VKontakte and SportsTracker), and present research on how to gather intelligence from social media with OSS.

Disclaimer

This article was originally published on 16th of April, exclusively for Patreon subscribers, so it shows outdated screenshots and an outdated interface. Since then, the Platform has improved significantly in terms of stability, features and user experience. It now also supports clustering, sidebars instead of info windows, and multi-selection.

You can get a small preview of how it looks now from my tweets:

Gather geo intel in a blink of an eye with new Open Source Surveillance update. It brings new modules, improved interface and enhanced performance to collect data even more efficiently. Now it's easier than ever to detect, track, and monitor potential threats in real-time. #OSINT pic.twitter.com/WekVAgx9GZ

— Offensive OSINT (@the_wojciech) March 30, 2023


Introduction

I don't need to convince anyone that Social Media Intelligence (SOCMINT) is one of the most popular OSINT fields and is broadly discussed in the community. In many professions people need to find and research the social media profiles of individuals: investigative journalists, LE officers, lawyers, and many other jobs require searching for leads in people's online footprint.

Besides the obvious information, like where an individual works, their friends, photos or hobbies, sometimes we can obtain their location from geotags or hints shown in photos. Open Source Surveillance is not about who, but rather about what and where: it does not focus on a particular person but on the territory where the photos were taken.

It's possible to get an inside look at ongoing live events, get help with geolocating pictures, or look for people who were present at a specific place during a specific time range. It's not only about surveillance but also about finding witnesses or estimating crowd size.

Currently, there are plenty of photo and video sharing platforms, like YouTube, Instagram, Twitter and Flickr, but not all platforms support geotags, so only ones that do have been implemented. Snapchat is also included, but it works differently because it is based on map.snapchat.com, which I will talk about later.

It's one of the simplest checks but the hardest to maintain in terms of scalability, for various reasons. As previously stated, I want this tool to be plug & play, so no input from the user is required, including API keys. Simply register and start your research out of the box.

To achieve that, I need to maintain accounts on Instagram and check whether they are still alive and usable. As with the majority of checks, some authentication in the form of a cookie, token or API key is mandatory to retrieve the data. But first, let's take a look at the logic.

    import json

    import requests
    from celery import current_task, shared_task

    # User, Coordinates, InstagramPlace, instagram_cookie, proxies and now
    # are defined elsewhere in the project.


    @shared_task(bind=False)
    def instagram_module_geo_search(id, lat, lon, user):
        user = User.objects.get(id=user)
        coordinates = Coordinates(id=id, user=user)
        results = []

        url = "https://www.instagram.com/location_search/"
        params = {"latitude": lat, "longitude": lon, "__a": 1}
        headers = {"Cookie": instagram_cookie}

        # Any network or JSON decoding problem is reported as a connection error.
        try:
            r = requests.get(url, params=params, headers=headers, proxies=proxies)
            r_json = json.loads(r.text)
        except Exception:
            return {'current': 100, 'total': 100, 'percent': 100,
                    'error': "Connection error, please try again",
                    "results": [], "type": "instagram"}

        if 'venues' in r_json:
            for counter, location in enumerate(r_json['venues']):
                try:
                    external_id = location['external_id']
                    # Report progress so the frontend can move the progress bar.
                    current_task.update_state(
                        state='PROGRESS',
                        meta={'current': counter, 'total': len(r_json['venues']),
                              'percent': int((float(counter) / len(r_json['venues'])) * 100)})

                    check_duplicates = InstagramPlace.objects.filter(
                        external_id=external_id, search_main=coordinates).exists()
                    if not check_duplicates:
                        name = location['name']
                        lat = location['lat']
                        lon = location['lng']
                        address = location['address']

                        place_db = InstagramPlace(search_main=coordinates, name=name,
                                                  external_id=external_id, lat=lat, lon=lon,
                                                  address=address, timestamp=now)
                        place_db.save()
                        results.append(dict(id=place_db.id, name=name, external_id=external_id,
                                            lat=lat, lon=lon, address=address))
                except Exception:
                    # Skip venues with missing fields.
                    pass
            return {'current': 100, 'total': 100, 'percent': 100, "results": results, "type": "instagram"}
        else:
            return {'current': 100, 'total': 100, 'percent': 100, "results": [], "type": "instagram"}

The simplified code above is responsible for checking Instagram places near a given latitude and longitude.

The endpoint https://www.instagram.com/location_search/ needs the latitude, longitude and __a=1 parameters for the search to be successful. The last one is especially important, because it returns easy-to-parse JSON. This is an example request to search by coordinates: https://www.instagram.com/location_search/?latitude=LAT&longitude=LON&__a=1

The first try-except clause checks whether the HTTP request succeeded and the response loaded as JSON. If not, it is most probably a connection error: a proxy problem, a banned account, or downtime on Meta's side.

If the response is valid and contains the 'venues' key, we can move forward and extract the data. With every iteration we also update the current task, which drives the progress bar on the frontend.

At the end, each place is saved to the database and appended to the results presented to the end user.

That's basically everything. The checks are very similar to each other and differ only in authentication or data format.

Implementing new social media

To get more familiar with each module and how it operates, we are going to implement a completely new one from scratch and look for interesting artefacts with it. VKontakte is a Russian social media website with slightly above 60 million monthly visits.

First things first: we need to do some research on access, the API and what the response looks like. It turns out that VK has an easy-to-use API, and to obtain a key you only need to register an app.

The documentation also says:

There can be maximum 3 requests to API methods per second from a client.

So we need a couple more keys to make it run smoothly. However,

Except the frequency limits there are quantitative limits on calling the methods of the same type. By obvious reasons we don't provide the exact limits info.

We have to take into account that a profile can be banned, which makes its key invalid.
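The limit of 3 requests per second can be respected with a small client-side throttle. The sketch below is one possible approach, not the Platform's actual code: the `Throttle` class and its injectable `clock` and `sleep` parameters are assumptions made for illustration and easy testing. It spaces calls evenly, so with `rate=3` consecutive requests start at least a third of a second apart.

```python
import time


class Throttle:
    """Space out calls so that at most `rate` of them start per second."""

    def __init__(self, rate, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = 1.0 / rate
        self.clock = clock
        self.sleep = sleep
        self.next_allowed = None  # earliest time the next call may start

    def wait(self):
        """Block until the next call is allowed and return the time waited."""
        now = self.clock()
        if self.next_allowed is None or now >= self.next_allowed:
            waited = 0.0
        else:
            waited = self.next_allowed - now
            self.sleep(waited)
            now = self.next_allowed
        self.next_allowed = now + self.min_interval
        return waited
```

One throttle per API key would be created once, for example `vk_throttle = Throttle(rate=3)`, and `vk_throttle.wait()` called right before each request made with that key.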

The endpoint that we will use looks as follows:

https://api.vk.com/method/photos.search?access_token=[ACCESS_TOKEN]&v=5.131&lat=[LATITUDE]&long=[LONGITUDE]&radius=15

It returns JSON like this (abridged):

    {
        "response": {
            "count": 2,
            "items": [
                {
                    "album_id": -7,
                    "date": 1409512233,
                    "id": 338288524,
                    "owner_id": 211698121,
                    "lat": 47.017895,
                    "long": 28.811475,
                    "sizes": [
                        {
                            "height": 130,
                            "type": "m",
                            "width": 97,
                            "url": "https://sun9-16.userapi.com/impf/c622620/v622620121/4d3/ODkFtubgUHY.jpg?size=97x130&quality=96&sign=86479864e1f15b00e4485235fa38b2ec&c_uniq_tag=5ZemMdAG_zP7JVr08hFTUr0RCYAwuWC4YuA4c4hKdsM&type=album"
                        }
                    ],
                    "text": "",
                    "has_tags": false
                }
            ]
        }
    }

The most important fields are date, id, owner_id, lat, long, text and the image URL from sizes, which translate to the model below:

    class Vkontakte(RandomIDModel):
        search_main = models.ForeignKey(Coordinates, on_delete=models.CASCADE)
        lon = models.CharField(max_length=100)
        lat = models.CharField(max_length=100)
        text = models.CharField(max_length=1000)
        photo_id = models.CharField(max_length=100)
        owner_id = models.CharField(max_length=100)
        timestamp = models.DateTimeField()
        image_url = models.CharField(max_length=10000)

where search_main is the foreign key to the coordinates currently searched by the user.

After the initial research we can start writing some code, beginning with the icon for the new module:

<i class="fa fa-vk" title="VKontakte"></i>

In the dropdown list, a specific ID is assigned to the scan action:

<a class="dropdown-item d-flex" href="javascript:void(0);" id="vkontakte_geo_search"><span class="align-self-center">Scan</span></a>

which leads to the following jQuery call:

    $('#vkontakte_geo_search').on('click', function() {
        snackbar("Searching for VKontakte")
        // The element holds text like "(LAT, LON)"; slice() strips the brackets and space.
        coordinates = document.getElementById("coordinates").innerHTML
        lat = coordinates.split(',')[0].slice(1, -1)
        lon = coordinates.split(',')[1].slice(1, -1)
        $.ajax({
            url: "../vkontakte/geo/scan/" + search_id + "/" + lat + "/" + lon,
            type: "get",
            xhrFields: {
                withCredentials: true
            },
            success: function(response) {
                if (response.task_id != null) {
                    get_task_info(response.task_id, pgrbar);
                }
            }
        })
    });

The coordinates are the latitude and longitude of the marker on the map. The handler makes an AJAX request to vkontakte/geo/scan/[SEARCH_ID]/[LATITUDE]/[LONGITUDE], where search_id is the ID of the current map.

We also need to add the same URL to urls.py in the Django project:

path('vkontakte/geo/scan/<id>/<lat>/<lon>', views.vkontakte_geo_search, name='vkontakte_geo_search'),

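And now we have to configure the view:

    import json

    from django.contrib.auth.decorators import login_required
    from django.http import HttpResponse

    # vk_module is the project module that holds the Celery task.


    @login_required()
    def vkontakte_geo_search(request, id, lat, lon):
        # Only AJAX GET requests start a task; anything else gets an empty task_id.
        if request.headers.get('x-requested-with') == 'XMLHttpRequest' and request.method == 'GET':
            vkontakte_geo_task = vk_module.vk_geo_search.delay(id, lat, lon, request.user.id)
            return HttpResponse(json.dumps({'task_id': vkontakte_geo_task.id}), content_type='application/json')
        else:
            return HttpResponse(json.dumps({'task_id': None}), content_type='application/json')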

In this view we just invoke the vk_geo_search task from vk_module. The function that does the actual work is presented below:

    import json
    from datetime import datetime

    import requests
    from celery import current_task, shared_task

    # User, Coordinates, Vkontakte, token and proxies are defined elsewhere in the project.


    @shared_task(bind=False)
    def vk_geo_search(id, lat, lon, user):
        user = User.objects.get(id=user)
        coordinates = Coordinates(id=id, user=user)
        results = []

        url = f"https://api.vk.com/method/photos.search?access_token={token}&v=5.131&lat={lat}&long={lon}&radius=5000"

        try:
            r = requests.get(url, proxies=proxies)
            r_json = json.loads(r.text)
        except Exception:
            return {'current': 100, 'total': 100, 'percent': 100,
                    'error': "Connection error, please try again",
                    "results": [], "type": "vk"}

        for counter, item in enumerate(r_json['response']['items']):
            try:
                photo_id = str(item['id'])
                # Report progress so the frontend can move the progress bar.
                current_task.update_state(
                    state='PROGRESS',
                    meta={'current': counter, 'total': len(r_json['response']['items']),
                          'percent': int((float(counter) / len(r_json['response']['items'])) * 100)})

                check_duplicates = Vkontakte.objects.filter(photo_id=photo_id, search_main=coordinates).exists()
                if not check_duplicates:
                    text = item['text']
                    lat = item['lat']
                    lon = item['long']
                    date = item['date']
                    timestamp = datetime.fromtimestamp(date)
                    owner_id = str(item['owner_id'])
                    photo_url = item['sizes'][4]['url']

                    vkontakte_db = Vkontakte(search_main=coordinates, text=text, owner_id=owner_id,
                                             lat=lat, lon=lon, image_url=photo_url,
                                             timestamp=timestamp, photo_id=photo_id)
                    vkontakte_db.save()
                    results.append(dict(id=vkontakte_db.id, pk=coordinates.id, text=text, owner_id=owner_id,
                                        lat=lat, lon=lon, photo_url=photo_url, timestamp=timestamp,
                                        photo_id=photo_id))
            except Exception:
                # Skip photos with missing fields.
                pass

        return {'current': 100, 'total': 100, 'percent': 100, "results": results, "type": "vk"}

First, we need to get the current user and the ID of the search. An error is returned when the connection fails, which might be the fault of the API key, the proxy, rate limiting or a timeout on the app side.

If there are matches, the task iterates over the results, saves them to the database and returns JSON at the end. In the meantime, it also updates the progress bar with the update_state function.
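One detail worth noting: the task reads `item['sizes'][4]`, which raises an IndexError whenever VK returns fewer than five sizes for a photo, and the surrounding try-except then silently skips that photo. A possible alternative, shown here only as a sketch, is to pick the widest size that is available. The helper name `largest_size_url` is hypothetical and not part of the Platform.

```python
def largest_size_url(item):
    """Return the URL of the widest entry in item['sizes'], or None if there is none."""
    sizes = item.get("sizes") or []
    if not sizes:
        return None
    # Entries without a width are treated as width 0, so they are picked last.
    widest = max(sizes, key=lambda size: size.get("width", 0))
    return widest.get("url")
```

In the task, `photo_url = largest_size_url(item)` would then replace the fixed index, with photos that return None skipped explicitly.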

As you remember, we make a request to vkontakte/geo/scan/[SEARCH_ID]/[LATITUDE]/[LONGITUDE], which returns the task_id from the Celery queue that is needed for the progress bar.

The last function only checks the status of the task: it updates the progress bar while the task is in progress and displays the data when the task is finished.
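The status-checking function itself is not reproduced in this article. As a rough, assumed sketch of the idea, the helper below turns a Celery task state and its accompanying data into the dictionary a progress bar could consume. The name `task_status_payload` and the shape of the returned dictionary are illustrative assumptions; only the 'PROGRESS' state and the 'percent' and 'results' keys come from the tasks shown above.

```python
def task_status_payload(state, info):
    """Map a Celery task state and its meta/result to a dict for the frontend."""
    if state == "SUCCESS":
        # On success, `info` is the dict returned by the task.
        return {"state": state, "percent": 100, "results": info.get("results", [])}
    if state == "PROGRESS":
        # `info` is the meta dict passed to update_state().
        return {"state": state, "percent": info.get("percent", 0), "results": []}
    if state == "FAILURE":
        # On failure, `info` is the exception raised by the task.
        return {"state": state, "percent": 100, "results": [], "error": str(info)}
    # PENDING or any other state: nothing to show yet.
    return {"state": state, "percent": 0, "results": []}
```

With Celery, it could be fed from `result = AsyncResult(task_id)` as `task_status_payload(result.state, result.info)` inside a small polling view.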

It's worth mentioning that we add the timestamp, image URL and a link to the details to the marker's info window.

To sum up, the flow looks like this:

- Add icon and event on click

- Add URL to the project

- Add new view that invokes task

- Add task to make request to VK and extract data

- Display it on map

The skeleton of the project is already done, so it's super easy to implement a new module like this one.

It's also very precise in terms of location. Each photo is located at most a couple of meters from the point where the marker is placed.
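One way to check that precision for a given search is to compute the distance between the marker and each returned photo. The sketch below uses the haversine formula with a mean Earth radius of 6371 km; the function name `distance_m` is an assumption for illustration, not something that exists in the Platform.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))
```

Since the model stores lat and lon as strings, they would need to be converted with `float()` before being passed in.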

Later we will take a look at how we can weaponize this information to gather intel about places and people, but first let's take a look at the rest of the social media checks.

Flickr & Twitter & YouTube

The logic behind these checks is the same as with the previous one, and I'm still looking for any other social media platform that allows searching by place or coordinates.

Flickr is the easiest of all the checks introduced. It uses an API key and secret for authentication, and each key has a limit of 3600 calls per hour, which is hard to reach with the current load. Still, there are a couple of additional keys in the database, which are rotated with each request.
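Rotating keys with each request can be sketched as a simple round-robin that also skips keys known to be invalid. This is an illustrative assumption about how such rotation might look, not the Platform's implementation: the `KeyRotator` class is hypothetical, and in the real project the keys live in the database rather than in a list.

```python
from itertools import cycle


class KeyRotator:
    """Hand out API keys round-robin, skipping keys marked as invalid."""

    def __init__(self, keys):
        self.keys = list(keys)
        self.invalid = set()
        self._cycle = cycle(self.keys)

    def mark_invalid(self, key):
        """Exclude a key, for example after its account has been banned."""
        self.invalid.add(key)

    def next_key(self):
        # Look at each key at most once per call so we never loop forever.
        for _ in range(len(self.keys)):
            key = next(self._cycle)
            if key not in self.invalid:
                return key
        raise RuntimeError("no valid API keys left")
```

Each request would call `next_key()`, and a key that starts returning authentication errors would be passed to `mark_invalid()`.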

YouTube, though, is the only check that requires two steps to extract all the necessary data, which makes it the slowest of all. The API does not return coordinates in the first response together with the results, so an additional request is needed for each video found to get its exact location. By default, the limit of videos is set to 50.
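The two steps can be sketched against the YouTube Data API v3, where `search.list` accepts `location` and `locationRadius` and `videos.list` returns coordinates under `recordingDetails`. The helper names and the default radius below are assumptions for illustration, and this is not necessarily how the Platform does it. Because `videos.list` accepts a comma-separated list of IDs, this sketch asks for all locations in one follow-up call rather than one call per video.

```python
SEARCH_URL = "https://www.googleapis.com/youtube/v3/search"
VIDEOS_URL = "https://www.googleapis.com/youtube/v3/videos"


def search_params(lat, lon, api_key, radius="1km", limit=50):
    """Step 1: query parameters for a location-restricted video search."""
    return {"part": "snippet", "type": "video", "location": f"{lat},{lon}",
            "locationRadius": radius, "maxResults": limit, "key": api_key}


def video_ids(search_json):
    """Collect the video IDs from a search response."""
    return [item["id"]["videoId"] for item in search_json.get("items", [])]


def details_params(ids, api_key):
    """Step 2: query parameters asking for the recording location of each video."""
    return {"part": "recordingDetails", "id": ",".join(ids), "key": api_key}


def locations(videos_json):
    """Map video ID -> (latitude, longitude) for videos that expose a location."""
    found = {}
    for item in videos_json.get("items", []):
        loc = item.get("recordingDetails", {}).get("location") or {}
        if "latitude" in loc and "longitude" in loc:
            found[item["id"]] = (loc["latitude"], loc["longitude"])
    return found
```

With the requests library, the first step would be `requests.get(SEARCH_URL, params=search_params(lat, lon, key)).json()`, and the second the same call against `VIDEOS_URL` with `details_params(...)`.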

Use cases

And now we are moving to the most interesting part of the article, the research: what is the best way to look for photos and posts, what we can find, and how to interpret the data.

Missing 411

This is a phenomenon I've been tracking for years, but I never had a chance to mention it. Brilliant researcher David Paulides wrote a couple of books about this mysterious and intriguing topic: a lot of people have disappeared in mysterious circumstances in national parks, rural areas and even some cities.

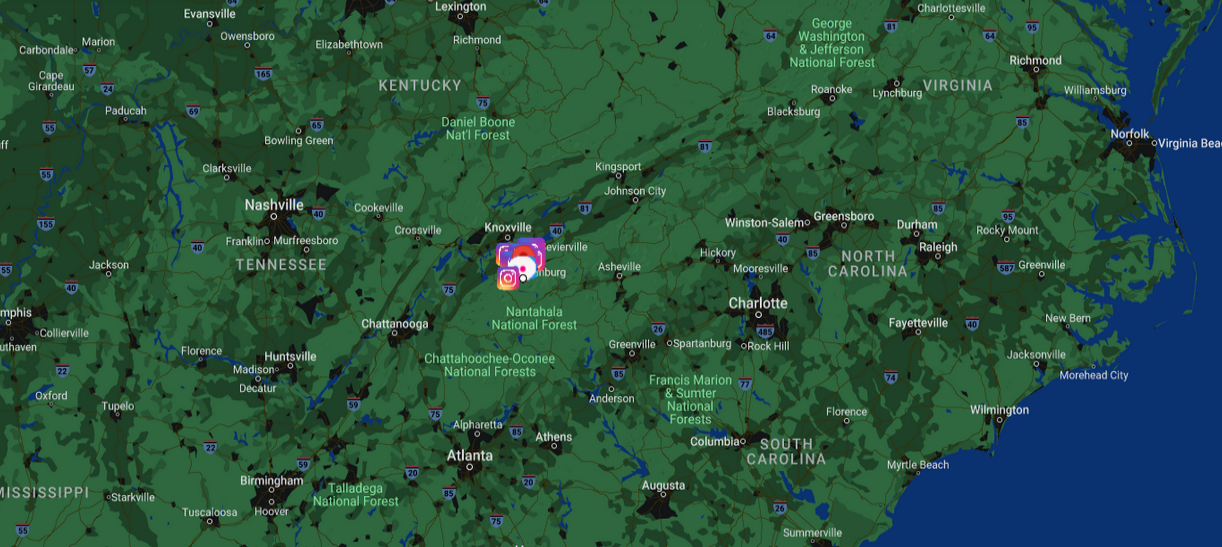

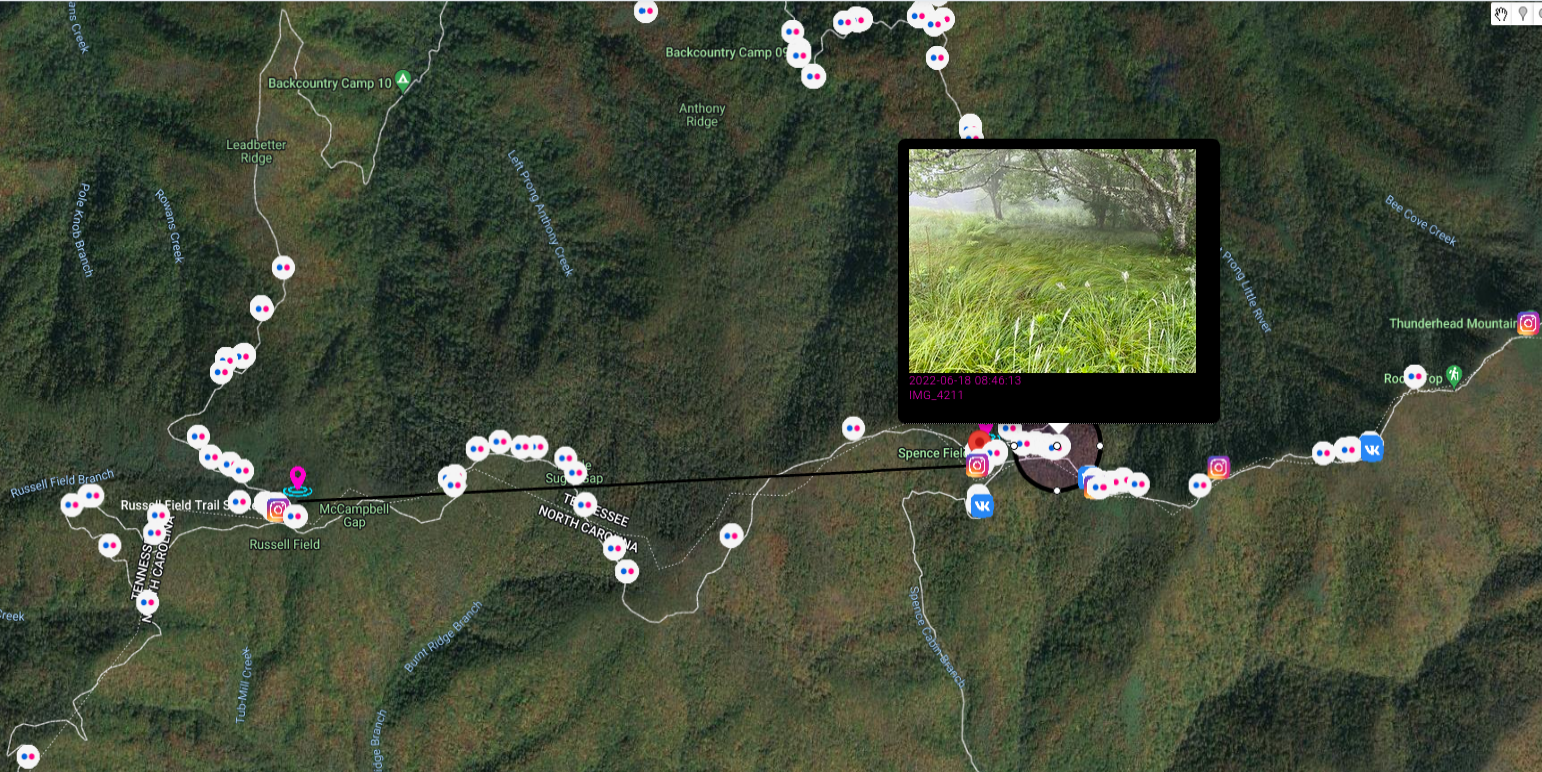

I want to describe the largest search in the history of Great Smoky Mountains National Park.

It's a good time to remind you about the draw feature on Google Maps. You can draw circles and lines or put additional markers. If you make a mistake, just click the undo button in the top right corner and your last action will be deleted. The eraser icon clears every drawing from the map.

Let's get back to the case. It happened on 14.06.1969 near Spence Field in Great Smoky Mountains National Park. Dennis Lloyd Martin (6 years old) was hiking with his family from Russell Field Shelter, where they had spent the previous night, to Spence Field.

Spence Field sits on the divide of Tennessee and North Carolina and is part of the Appalachian Trail. The field is grassy and runs in an east to west direction, with the north drainage going into Tennessee and south going into North Carolina.

This is a quote from Mr. Paulides' book "Missing 411: Eastern United States". As a former law enforcement officer, he describes everything in great detail, and his books are a must-read for everyone interested in missing persons cases.

It was a great atmosphere for the children to enjoy a national park setting. At one point, the boys decided to split up and play hide and seek. Dennis was last seen on the Tennessee side of the field, fifty feet from where Clyde and William were sitting. After three to five minutes of not seeing, Mr. Martin became concerned and began calling out loud for his boy, but there was no answer

I marked on the map the track they hiked as well as Spence Field, and drew a circle in the place where Dennis was last seen, on the Tennessee side. Photos from social media are a nice addition: they show the trail they travelled and the place they stayed.

Paulides refers to an article from July 21, 1969 in the Knoxville News Sentinel, which states that between around 4:30 PM and 5:30 PM (3:30 PM was the approximate time of the disappearance), another family was visiting an area five to seven miles from where Dennis Martin disappeared. This place is marked with another circle and is known as Sea Branch Creek near Rowans Creek in Cades Cove. They heard an "enormous, sickening scream" and mentioned a potential encounter: "It wasn't a bear. It was a man hiding in some bushes. He was definitely trying to hide from us."

The same day at 8:30 PM, heavy rain started to fall and made it impossible to continue the search.

On the next day, 16th June:

NPS personnel searched all major drainages in the area of Spence Field. Thirty Boy Scouts on an outing were used as searchers along with fifty-one ranger students who were on a field trip in the area. Special approval was requested from Tony Stark, the regional chief of Visitor Protection to hire a helicopter to transport equipment and establish a base camp at Spence Field. This was approved. Various North Carolina rescue squads were contacted and started their response. Two Huey helicopters from the Air Force were requested and dispatched. Forty Special Forces (Green Berets) from the Third Army Headquarters in Fort Benning, Georgia, were requested and dispatched.

So a massive, massive search was conducted:

The number of searchers swelled to almost eight hundred on June 20. On June 21, the searchers numbered 1400. The area around Spence field was saturated. Drainages were searched inch by inch. Armed Special Forces personnel were looking for tracks, broken twigs, anything leading toward evidence of where the boy may be.

To show you the scale of the search, the Coast Guard committed two boats to Fontana Lake, which is around 20 miles from Spence Field.

Unfortunately, the boy was never found. You can read about other bizarre circumstances around this case, and many, many others, in David Paulides' Missing 411 book series.

Events & infrastructure

Open Source Surveillance can provide additional support on the ground: the social media module helps track ongoing events, the security of buildings, or the situation on the street.

When providing access for testing purposes, I asked about use cases for the tool and was amazed by what people can use the platform for. Many mentioned supporting activities on the ground, like monitoring sports events or concerts; some responses included domestic violence tracking or taking care of the physical security of facilities, with airports mentioned specifically.

To present the idea, let's take one of the biggest airports in the world, which served almost 90 million passengers in 2018: Dubai International Airport (DXB).

That's a lot of information to process, and new data appears every couple of minutes, especially from Snapchat, Twitter and Instagram. An operator of the tool might look for a variety of findings, from passenger misbehaviour to keeping track of sensitive places in the airport. When something occurs, even more posts and photos will appear, which gives an additional pair of eyes on what's actually going on on the ground.

Also, remember this is just one module out of many to keep an eye on. In the next parts, Wi-Fi and unsecured devices will be added, which makes monitoring even more interesting.

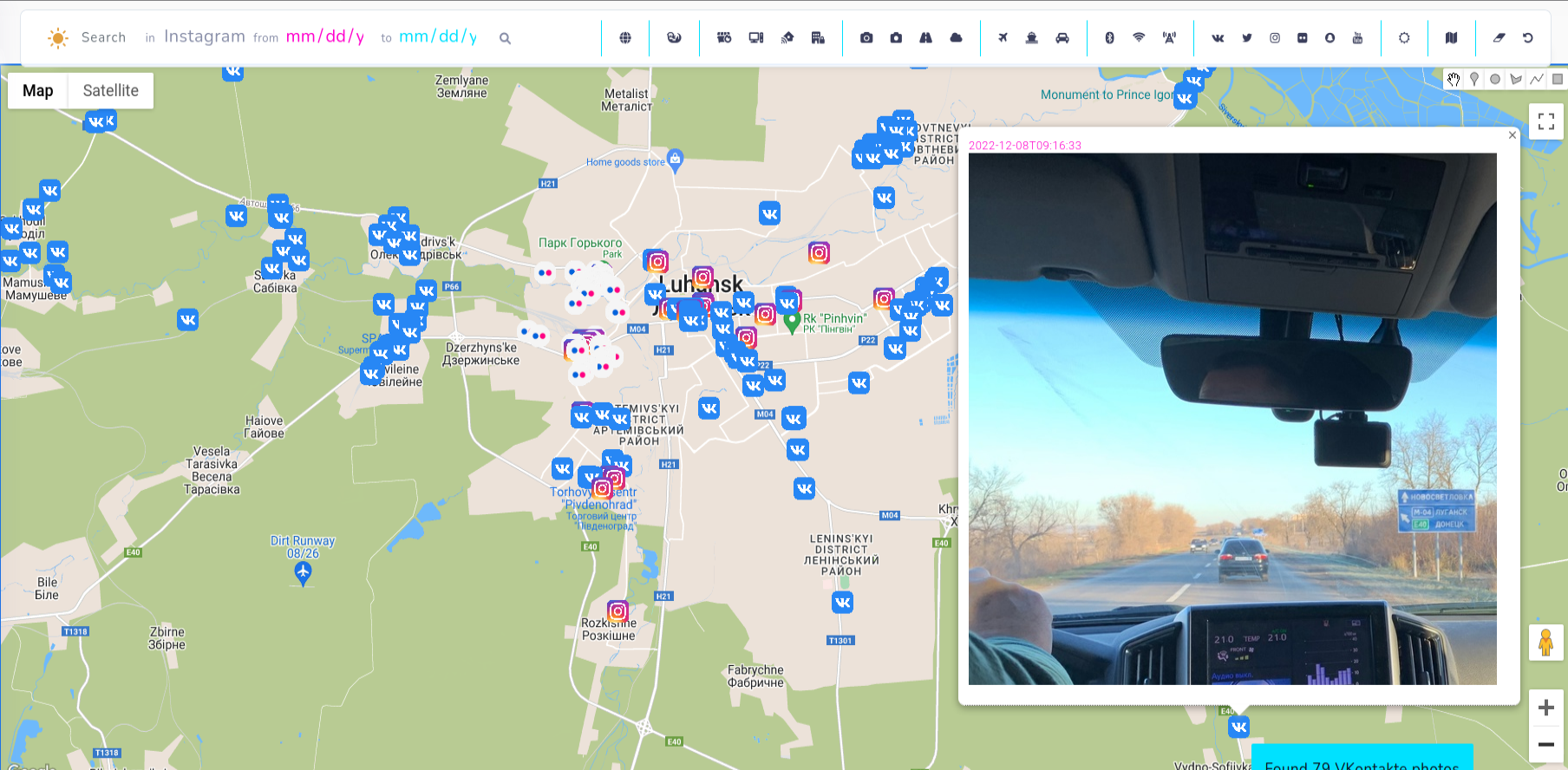

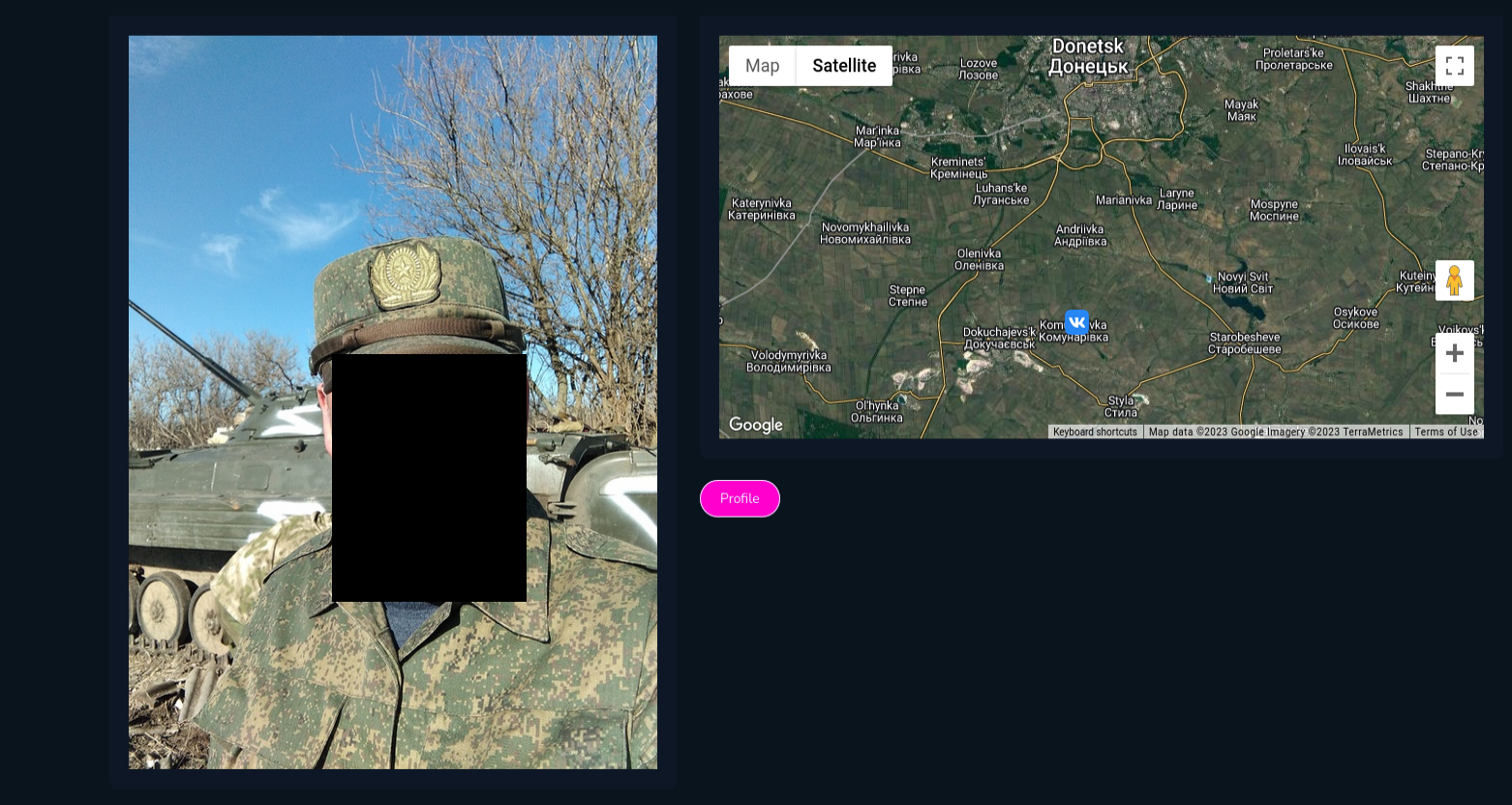

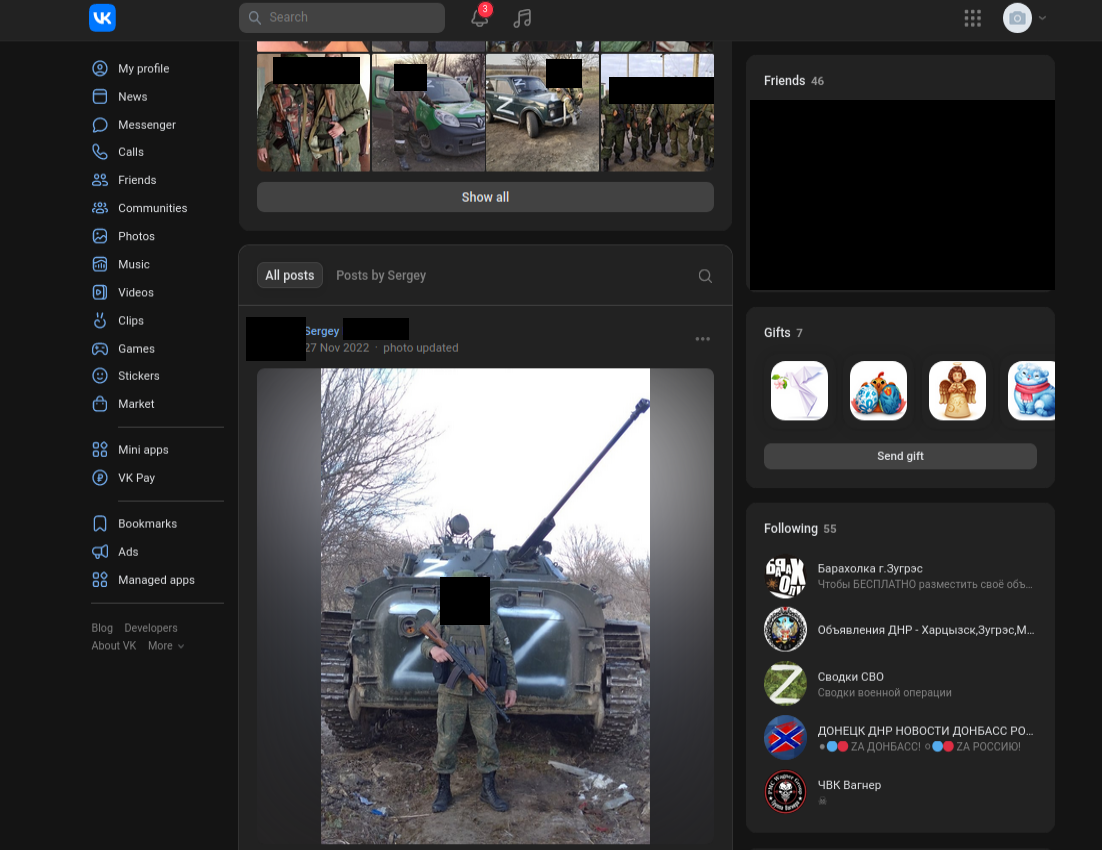

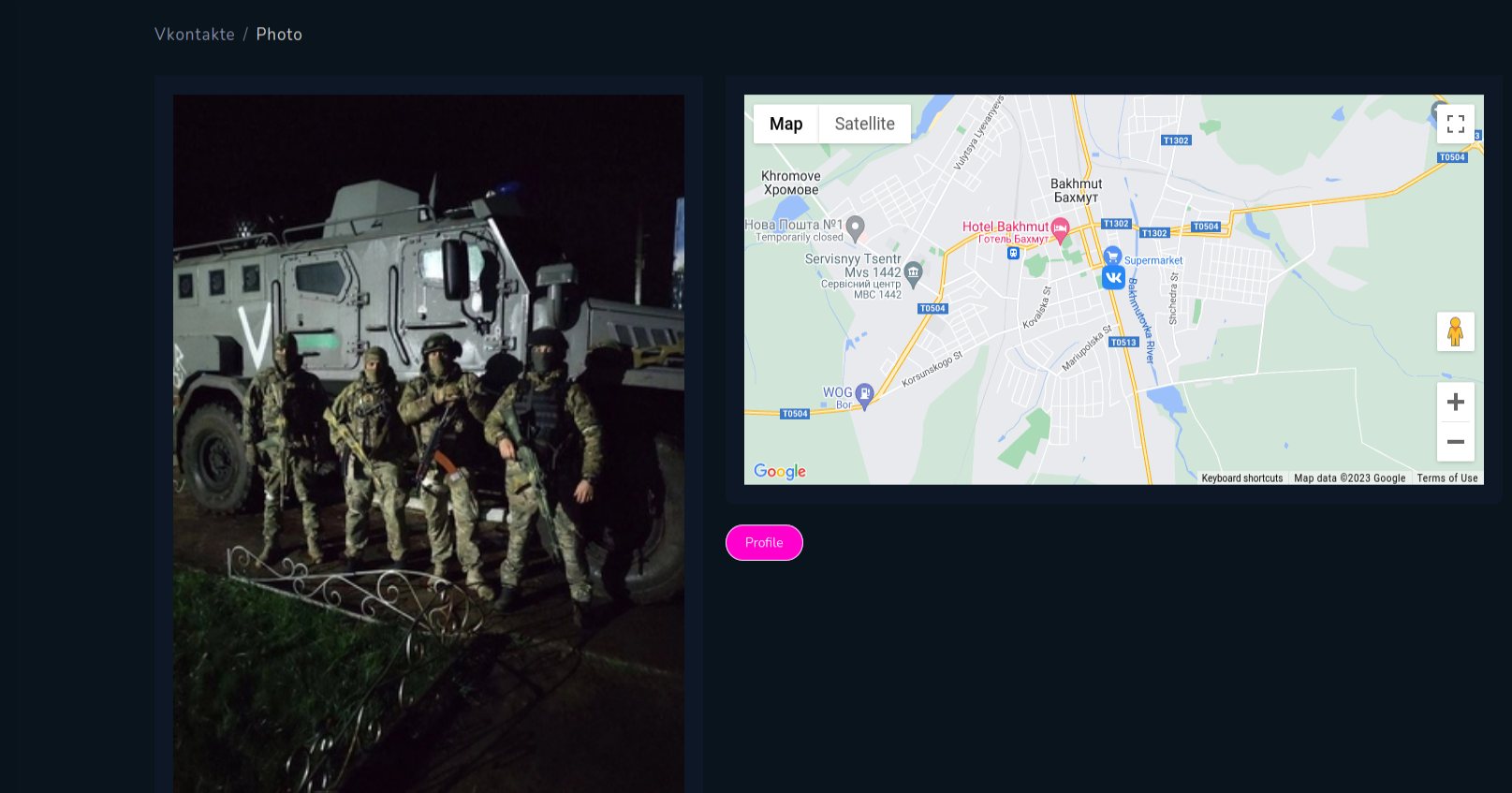

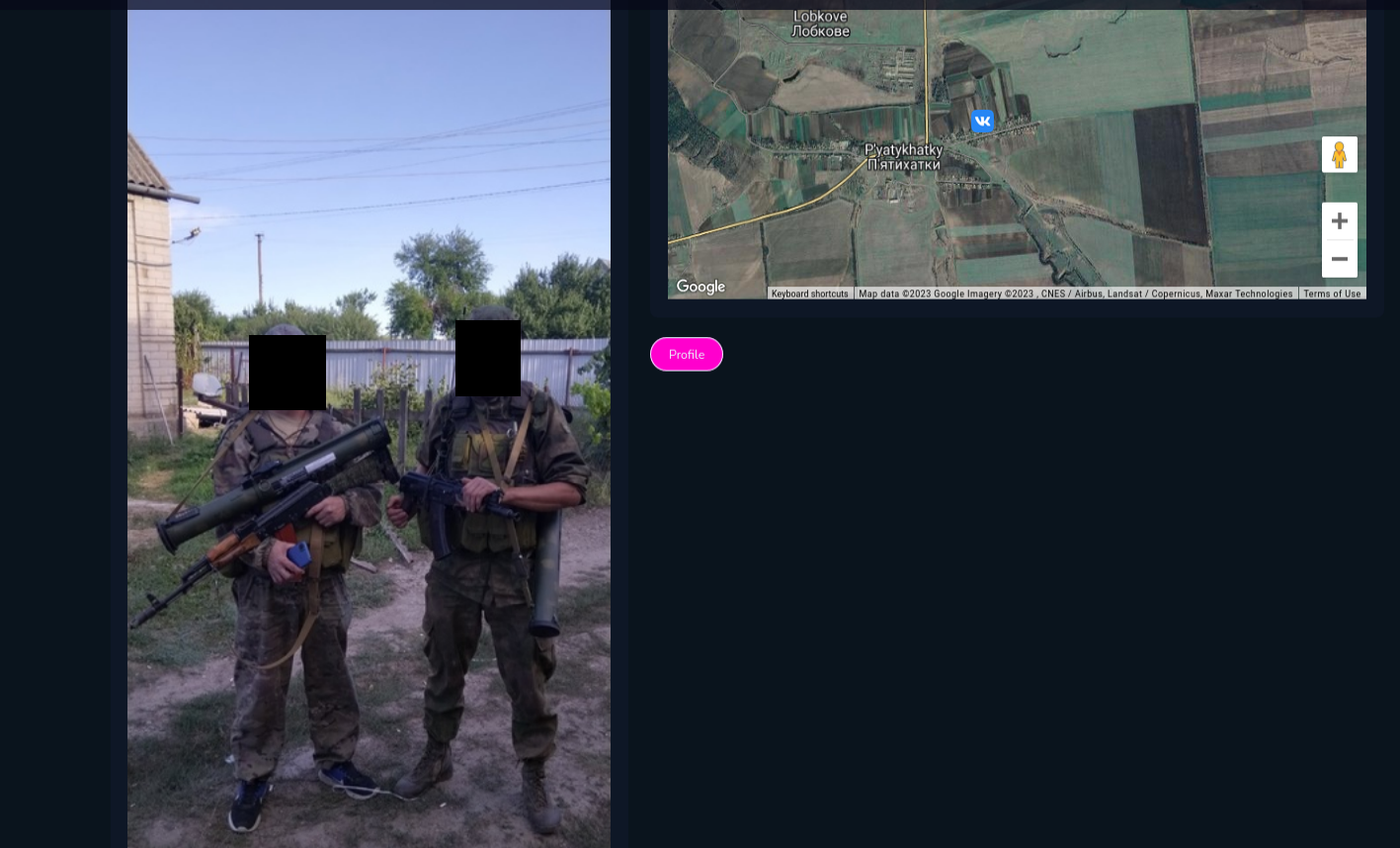

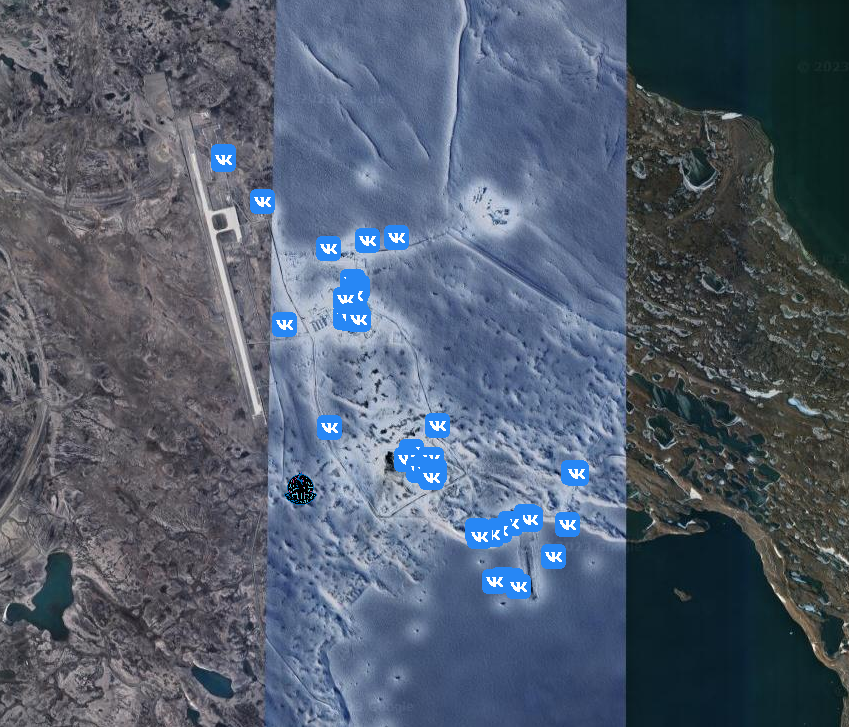

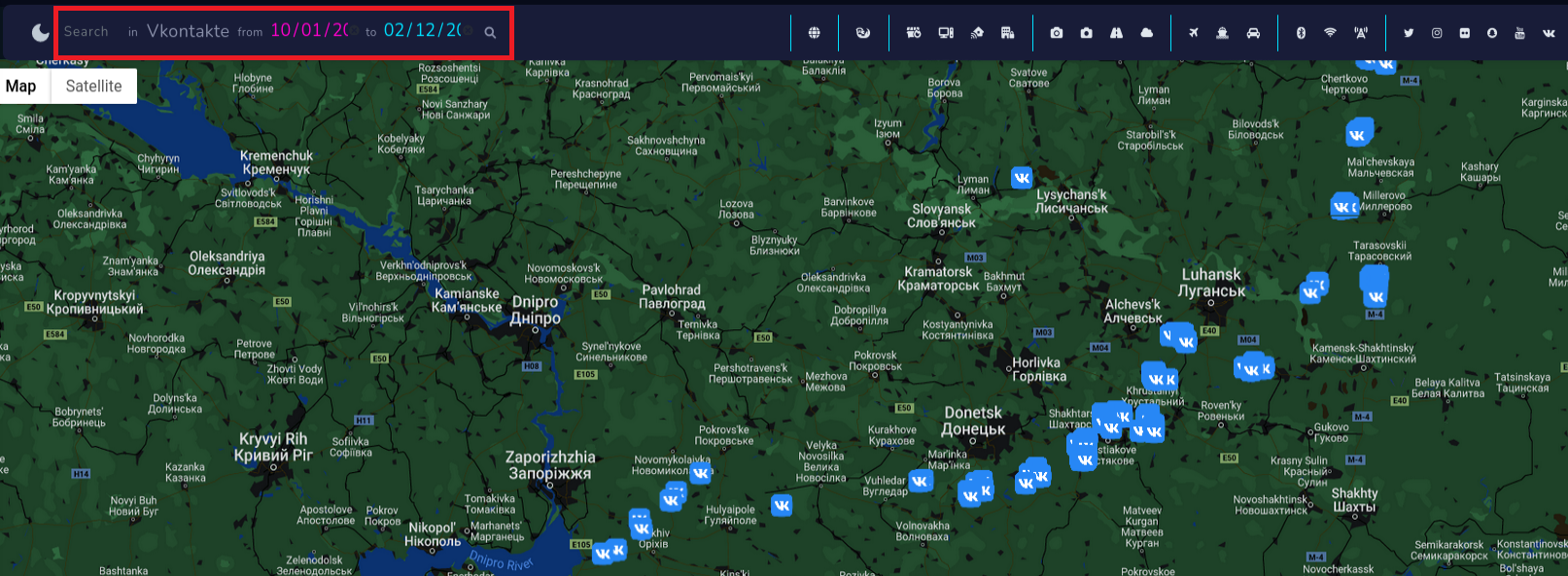

VKontakte tracking

Let's have fun with the new module and track the military activities of Russian forces in Ukraine. It happens more often than it should that sensitive information is leaked due to a lack of cyber awareness among random or even trained people.

On VKontakte, Russian soldiers post photos or selfies revealing timestamps, positions and weapons. You can gather a ton of information from photos like that and use it to your advantage.

I found these photos near Luhansk, which show accommodation conditions and a broken car; however, there are more photos from different periods and different territories.

In addition, we can collect information about the person behind the photo by going to their profile.

Going deeper, on the VKontakte profile we can check which brigade he serves (or served) in, and cross-reference it with other types of intelligence to collect even more information about what's going on on the ground.

What you can do with the tool depends on your imagination. One intriguing approach is to compare photos from VK with Russian military installations, training camps and other military objects.

Status-6 on Twitter released the locations of Russian military objects and infrastructure:

Russian Military Installations v 1.4 - Eastern Military District update:

— Status-6 (@Archer83Able) July 1, 2022

Just completed the final part of my long-term project in Google Earth to map all known Russian military-related sites across Russia and beyond.

DOWNLOAD: https://t.co/A6akI3vcg0

1/4 pic.twitter.com/3N4VJnMpEO

I downloaded the file, displayed it on the map and compared VK photos in some of the locations, which led me to learn about the people and equipment in these facilities.

You can find Russian military installations in very, very remote places, but even there, someone has uploaded a photo with geolocation.

As a reminder, you can filter findings by date to get photos from a specific period.
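The Platform does this filtering in its interface. For results exported or processed elsewhere, the same idea can be sketched in a few lines, assuming each result is a dict with a `timestamp` datetime like the ones built by the VK task. The function name `filter_by_date` and the sample data are illustrative only.

```python
from datetime import datetime


def filter_by_date(results, start, end):
    """Keep results whose 'timestamp' lies in [start, end], both ends inclusive."""
    return [r for r in results if start <= r["timestamp"] <= end]


# Example: keep only photos taken in 2022.
photos = [
    {"photo_id": "1", "timestamp": datetime(2022, 3, 1, 12, 0)},
    {"photo_id": "2", "timestamp": datetime(2021, 8, 15, 9, 30)},
]
photos_2022 = filter_by_date(photos, datetime(2022, 1, 1), datetime(2022, 12, 31, 23, 59, 59))
```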

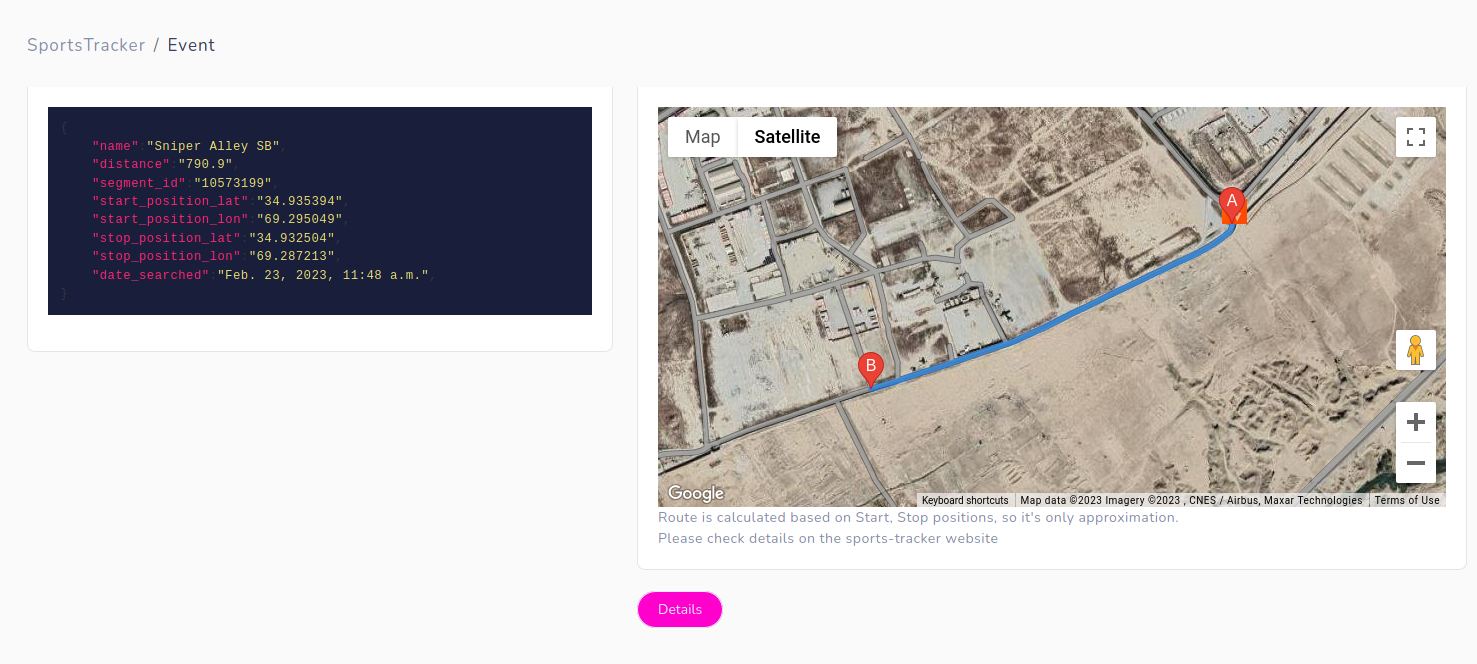

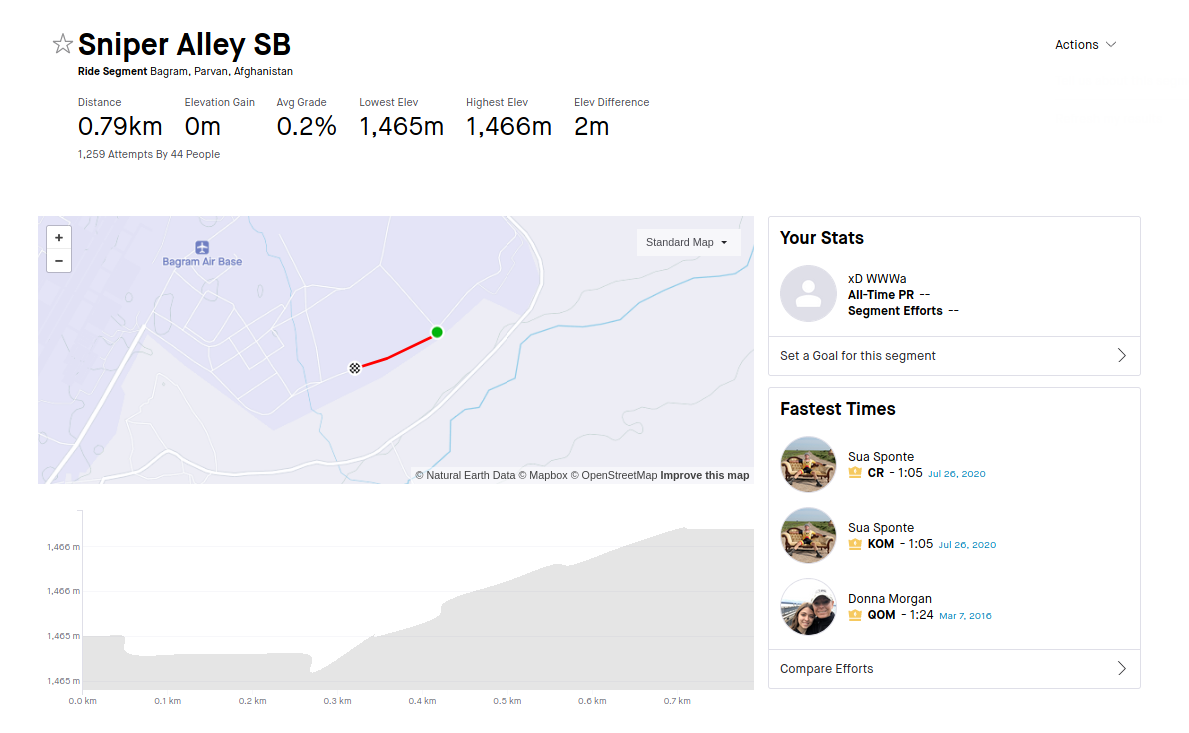

Fitness apps

Another level of espionage is provided by tracking fitness applications. A couple of years ago, Strava and its heatmap made headlines over the "operational security" of personnel on US military bases.

And now you can do even more detailed research about routes taken across the world. The first thing I checked while testing this module was Bagram Airfield (BAF) in Afghanistan.

For obvious reasons there is no new data after August 2021, but you can explore and discover bases that are currently operational.

Moreover, the website https://www.sports-tracker.com/ lets you explore routes and photos taken during physical activities. These photos are very accurately located, which makes it an additional photo source.

Speaking of fitness apps, the AllTrails module was used to track the exact location of a former top Biden official.
