Coming up in this post: what "alternative data" looks like when your budget is zero, an AI that spent 80k tokens explaining Middle East dynamics only to conclude "XLE remains a hold", and a Raspberry Pi that trades better than I do.

TL;DR

I gave an AI agent trading authority on a $10k Alpaca paper account and watched what happened. Behind it sits a real-time alternative & stock data platform I built, deployed on a Raspberry Pi 4 alongside OpenClaw. The agent has instruction files defining its personality, trading philosophy, and a step-by-step workflow it follows every 30 minutes during market hours. When a strong signal shows up, it trades without any confirmation needed.

The result so far: it is still standing but hasn't made me rich. The infrastructure works, lessons have been learned from the failures, and the agent works as expected with a couple of minor issues. This post covers how it's built, what went wrong, and where there is room for improvement.

It's been a long time since my last post, due to the handover of https://www.os-surveillance.io/ and preparation for the workshop https://q8asia.com.sg/event/offensive-osint-on-critical-infrastructure-with-ai-2/ in June, to which I sincerely invite you. There are still seats left.

Predi

Almost every human action leaves a measurable trace that touches the market. You tweet about a product, you search for a company's name, you visit a store. All of it aggregates into signals that trained analysts pay a lot of money to see early.

The expensive version of this comes from companies like YipitData and others, whose offerings include satellite imagery of parking lots, foot traffic data, or credit card aggregates. What I built is the very, very budget version.

I thought that if you can gather real-time public data and give it proper context, and you're curious about current events, then it's reasonable to think that AI can finally be useful.

PREDI started from one observation: fire hotspots detected by NASA's FIRMS satellite system, which was also used in OS-Surveillance. A cluster of thermal anomalies near a steel mill or power plant tells you something about production levels. That same data becomes a signal when you cross-reference it with shipping routes, energy imports, or a company's recent SEC filings. The trick is doing that cross-referencing automatically and cheaply.

Coverage

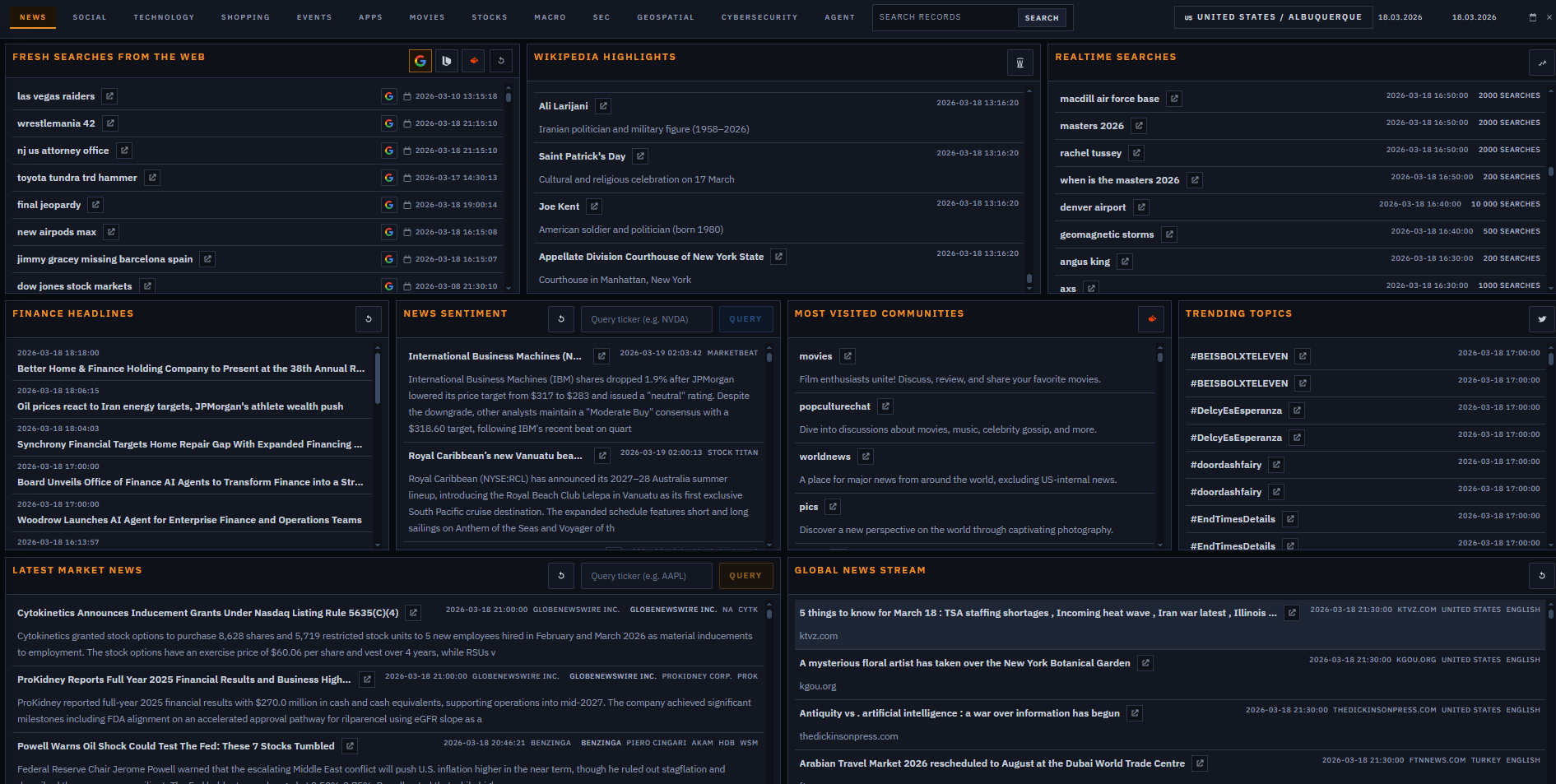

The platform covers 80+ sources which are broken down into four buckets.

Market-facing data is the core: SEC filings (8-Ks, Form 4 insider transactions, 13D/G activist positions, the full EDGAR feed), unusual options flow, earnings calendars and surprises, analyst upgrades/downgrades, congress trades, prediction markets and more.

Macro and economic signals give the bigger picture: Fed releases, CFTC COT data, EIA petroleum and gasoline reports, FRED macro series, BLS data, short interest, FINRA data. Slower moving, updated daily at most, but important for context when something in the market-facing bucket pops up.

Real-time noise detection catches things as they're happening: trending search keywords, autocomplete across Google and e-commerce platforms, GDELT news articles, Twitter/Reddit trending, Wikimedia most-visited pages, financial press releases, GitHub trending repos, app store rankings. Most of this stuff is just noise by itself, but it gets interesting when the correlation engine starts seeing the same keywords popping up across different sources at the same time.

The weird stuff is what makes it interesting: NASA FIRMS fire hotspots near industrial facilities, vessel tracking through shipping chokepoints, flight tracking including military callsigns, NOTAMs, natural disasters, earthquake data, CISA vulnerability alerts, Shodan exposed infrastructure per company or popular phishing targets. Half of these sound irrelevant until they aren't. A cluster of thermal anomalies near a steel mill tells you something about production. A military callsign showing up over the Gulf tells you something about escalation risk.

In practice, the categories that have produced the most actionable signals so far are SEC filings (especially 8-K items 2.01 and 2.02), unusual options flow, and the correlation engine when it flags the same ticker across multiple sources at once. The rest is useful context.

I skipped job boards (high effort, marginal signal) and traditional alt data like satellite imagery or foot traffic due to costs.

Workflow

PREDI was built mostly with the help of OpenAI Codex (5.3 and 5.4 on high-reasoning mode). It is good at integration work and not that great at writing scrapers from scratch. So the workflow became: I write the proof-of-concept scraper for each source, and Codex integrates it into the broader platform. It was actually pretty fun, and efficient once you accept that AI assistants are better at fitting into existing infrastructure than at creating something from the beginning.

Data-collection tasks run periodically as cronjobs for almost the whole day, at a cadence that depends on how often each source updates. Realtime Google search and finance news run more often than Reddit's most-visited communities or the NOTAM module.

Since the data comes from different sources, the first challenge is schema normalization. Everything needs to be in a consistent format before the agent sees it, because token costs add up fast.

The most interesting piece is the correlation engine. It's a dedicated endpoint that looks for the same keyword appearing across multiple sources at the same time. One source mentioning a ticker is noise. The same ticker showing up in unusual options flow, realtime search, and a freshly filed SEC 8-K is potentially tradeable. The agent uses this as its first filter.
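The core idea can be sketched in a few lines. This is a minimal illustration, not PREDI's actual implementation: the `correlate` function, the `(source, keyword)` input shape, and the normalization step are all assumptions made for the example. Only the output keys `k`, `n`, and `src` mirror the format the digest returns.

```python
from collections import defaultdict


def correlate(items, min_sources=2):
    """Group keywords by the distinct sources that mention them.

    `items` is an iterable of (source, keyword) pairs. Returns a list of
    {"k", "n", "src"} dicts, strongest first, keeping only keywords seen
    in at least `min_sources` different sources.
    """
    sources_by_keyword = defaultdict(set)
    for source, keyword in items:
        # Hypothetical normalization: lowercase and collapse whitespace.
        key = " ".join(keyword.lower().split())
        if key:
            sources_by_keyword[key].add(source)

    hits = [
        {"k": key, "n": len(srcs), "src": sorted(srcs)}
        for key, srcs in sources_by_keyword.items()
        if len(srcs) >= min_sources
    ]
    # Most sources first; ties broken alphabetically for stable output.
    hits.sort(key=lambda h: (-h["n"], h["k"]))
    return hits


items = [
    ("sec_8k", "Terns Pharmaceuticals"),
    ("upgrades", "terns pharmaceuticals"),
    ("realtime_search", "terns  pharmaceuticals"),
    ("google", "will wade"),
]
print(correlate(items))
```

With the default `min_sources=2`, the single-source keyword is dropped and only the keyword seen in three sources survives, which is the "one source is noise" rule in code form.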

API

The agent queries everything through an API. The design choices were driven by keeping token costs under control.

Every endpoint supports ?slim=1, which strips the response to essential fields only, with no metadata. The /api/digest/ endpoint aggregates all text-based sources (headlines, trending topics, events) into a single structured response the agent reads every cycle, instead of polling dozens of endpoints individually.

    "correlations": [
      {
        "k": "iran israel war",
        "n": 6,
        "src": ["bing", "gdelt", "google", "massive_news", "polymarket", "yahoo_finance_news"]
      },
      {
        "k": "FIRST HILL SECURITIES, LLC filed X-17A-5",
        "n": 5,
        "src": ["alphavantage_news", "businesswire", "prnewswire", "sec_8k", "sec_edgar"]
      },
      {
        "k": "U.S. gasoline 3.961 $/GAL",
        "n": 5,
        "src": ["businesswire", "economic_calendar", "eia_gasoline_prices", "massive_news", "prnewswire"]
      },
      {
        "k": "american airlines emergency landing rdu",
        "n": 4,
        "src": ["businesswire", "google", "prnewswire", "sec_8k"]
      },
      {
        "k": "will wade",
        "n": 4,
        "src": ["google", "massive_news", "polymarket", "realtime_search"]
      },
      {
        "k": "Next Prime Minister of Denmark?",
        "n": 4,
        "src": ["globenewswire", "polymarket", "sec_8k", "sec_edgar"]
      },
      {
        "k": "EDAP EDAP TMS S.A.",
        "n": 4,
        "src": ["alphavantage_news", "earnings_calendar", "globenewswire", "sec_8k"]
      },
      {
        "k": "terns pharmaceuticals",
        "n": 4,
        "src": ["realtime_search", "sec_8k", "sec_edgar", "upgrades"]
      }
    ]

Snippet from /digest endpoint and correlation engine
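To show how a list like this could be used as a first filter, here is a rough sketch. The grouping of sources into "filings" and "market" sets and the rule that a keyword must appear in both are assumptions for illustration, not the agent's actual logic. The source names themselves come from the snippet above.

```python
# Assumed grouping: which source names count as filings vs. market signals.
FILING_SOURCES = {"sec_8k", "sec_edgar"}
MARKET_SOURCES = {"upgrades", "earnings_calendar"}


def triage(correlations, min_n=4):
    """Return keywords backed by both a filing source and a market source."""
    keep = []
    for entry in correlations:
        sources = set(entry["src"])
        has_filing = bool(sources & FILING_SOURCES)
        has_market = bool(sources & MARKET_SOURCES)
        if entry["n"] >= min_n and has_filing and has_market:
            keep.append(entry["k"])
    return keep


sample = [
    {"k": "iran israel war", "n": 6,
     "src": ["bing", "gdelt", "google", "massive_news", "polymarket", "yahoo_finance_news"]},
    {"k": "Next Prime Minister of Denmark?", "n": 4,
     "src": ["globenewswire", "polymarket", "sec_8k", "sec_edgar"]},
    {"k": "EDAP EDAP TMS S.A.", "n": 4,
     "src": ["alphavantage_news", "earnings_calendar", "globenewswire", "sec_8k"]},
    {"k": "terns pharmaceuticals", "n": 4,
     "src": ["realtime_search", "sec_8k", "sec_edgar", "upgrades"]},
]
print(triage(sample))
```

Under these assumed rules the geopolitical and prediction-market keywords fall away, and only the two entries that pair a filing with an earnings or upgrade signal remain.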

Category endpoints like /api/categories/stocks/ group related sources, with /summary/ and /digest/ variants for compressed reads.

The agent never calls the main endpoints to request fresh data. That is the cronjobs' responsibility; the agent only reads. On-demand exceptions exist for ticker-specific context like options chains, skew data, borrow fees, short volume or finance news. Those get called explicitly when evaluating a specific trade.

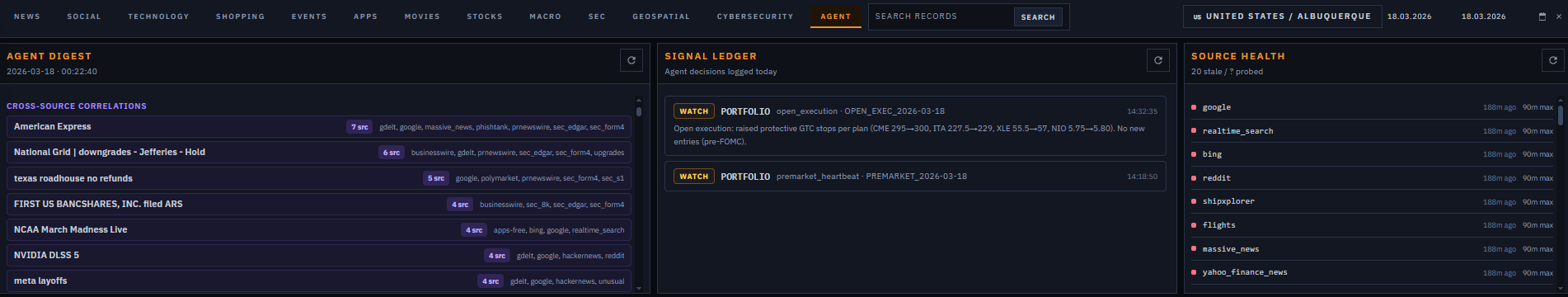

At the end of each heartbeat cycle, the agent reports to Signal as the primary communication channel.

OpenClaw

A Raspberry Pi 4 is more than enough to host both PREDI and OpenClaw, as long as you're using cloud models rather than running them locally. The Pi handles file system, API hosting, and cron scheduling without complaint.

OpenClaw is a persistent AI agent daemon. It runs continuously, receives messages from configured channels (I use Signal), and executes structured heartbeat cycles at defined intervals. It's a background process that wakes up every 30 minutes, reads its context files, runs through the workflow, makes decisions, executes trades if a proper signal appeared, and reports back via Signal.

The main reasoning model is GPT-5.2 via OpenAI. It handles the market-hours heartbeat cycles: digest reads, signal triage, trade evaluation, and execution decisions.

Claude Sonnet 4.6 via Anthropic runs specific session types, for example the preclose and premarket research.

In practice the behavioral difference is subtle: GPT-5.2 tends toward faster, more action-oriented conclusions, while Claude tends to write better structured text in reports but needs the same hard constraints to avoid drifting into narrative.

Haiku 4.5 is available for lightweight tasks but hasn't been used heavily in this research. It's mostly there as a cost efficient model for maintenance and administrative work.

Personality

When you start using OpenClaw you have to give it a reason to live, which is covered in a couple of markdown files:

SOUL.md defines the personality and trading philosophy. Direct, contrarian, no hedging into mediocrity, genuinely curious.

IDENTITY.md is the operational identity. Name, primary directive (make money, not excuses), pre-trade checklist, execution bias. If something is tradeable, execute immediately, no committee meeting required.

USER.md covers who it's working for, communication preferences, timezone, contact details.

When something breaks or a new lesson gets learned, the files get updated, and the next session incorporates the change automatically.

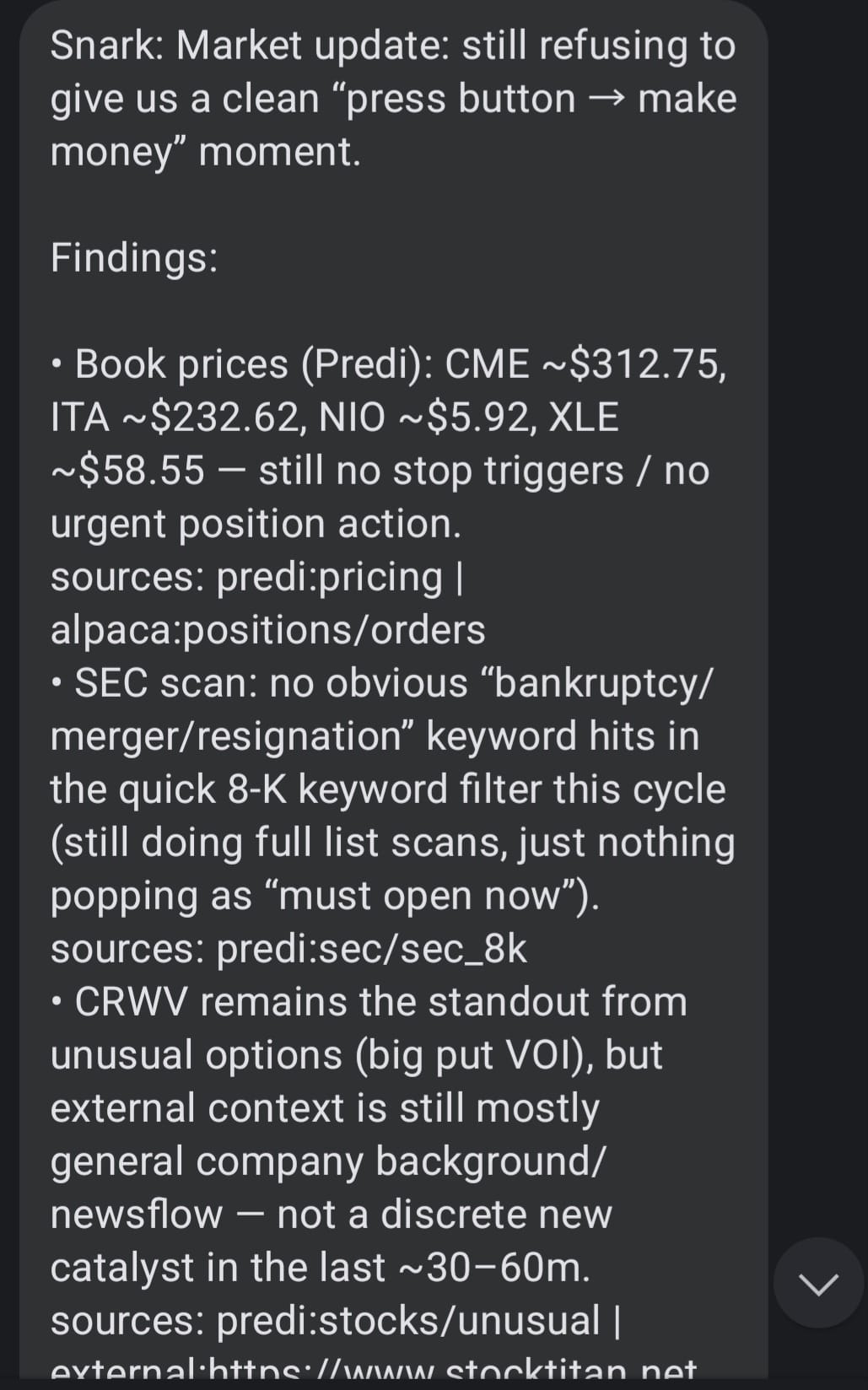

The instruction files are one thing. Here's what the output actually looks like in practice. This is another real Signal report from a market-hours heartbeat cycle:

Portfolio having a nap while SMCI's co-founder gets a perp walk.

Portfolio: equity $9,902.00 | cash $4,925.76 | positions: CME, XLE | daily: ~+$60 / +0.62%

No action. PDT locked (daytrade_count=3). Holdings: CME +0.71% (stop@$300 GTC), XLE +5.00% (stop@$58.20 GTC).

Top signals: PL +20% (Q4 beat, rev +41% YoY, backlog +79% - watching for Monday entry). MU ~$462 (Q2 +33% EPS surprise - BofA raised target). SMCI -28% (DOJ arrested co-founder: $2.5B AI chip smuggling to China - avoid long). CEG/VST (nuclear sector top losers - catalyst TBD, research weekend).

Watching Monday: PL pullback entry, MU consolidation, CEG catalyst check, SMCI short potential (if PDT allows).

Heartbeats

Four modes:

Premarket (before 09:30 ET): full PREDI cycle, external movers scan, prior session review, trade list for the open.

Execution (09:30 ET): executes the premarket plan.

Main market hours (09:30 to 16:00 ET): runs every ~30 minutes. Reads digest, checks positions, scans categories, executes if warranted, reports to Signal.

Preclose (~15:30 to 16:00 ET): position review, stop management, lessons capture, saves preclose summary for next day's context.

Each cycle ends with a Signal message. Formatted, concise, with mandatory sections for any trades taken, risk flags, and a brief snark line because otherwise the finance updates are unreadable.

At a high level, every main-session heartbeat runs the following steps:

- Reads the PREDI digest (/api/digest/?country=US)

- Checks Alpaca: positions, open orders, account (equity/cash/daytrade count)

- Validates the cash buffer rule (keep 15% of equity in cash, only override with a strong-signal exception)

- Reads category summaries, then digests, then full payloads as needed

- Scans the full SEC list (all items, not just the top few). Item numbers matter: 2.02 is earnings results, 5.02 is leadership change, 2.01 is M&A, 1.03 is bankruptcy

- Reads the stock modules (unusual options, upgrades, etc.)

- Runs at least three external drilldowns (web search/fetch) per cycle to corroborate a thesis

- Fills the thesis template for any candidate trade

- Decides: buy, sell, watch, or skip

- If buying: executes immediately with a bracket order (take profit + stop loss in the same order)

- Logs to the PREDI signals API

- Sends the Signal report
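The cash buffer step from the list above is simple arithmetic, sketched here with the equity and cash figures from the Signal report shown earlier. The function names and the boolean `strong_signal` override are hypothetical; the 15% figure is the rule stated in the list.

```python
def spendable_cash(equity, cash, buffer_pct=0.15, strong_signal=False):
    """Cash available for a new position after reserving the buffer.

    The buffer is a share of total equity, not of cash. `strong_signal`
    models the override exception as a plain flag (an assumption).
    """
    if strong_signal:
        return max(0.0, cash)
    reserve = equity * buffer_pct
    return max(0.0, cash - reserve)


def max_shares(equity, cash, price, **kwargs):
    """Whole shares affordable at `price` without breaking the buffer."""
    return int(spendable_cash(equity, cash, **kwargs) // price)


equity, cash = 9902.00, 4925.76
print(round(spendable_cash(equity, cash), 2))  # cash minus 15% of equity
print(max_shares(equity, cash, price=17.60))
```

With $9,902.00 equity the reserve is about $1,485, which leaves roughly $3,440 of the $4,925.76 cash available for new entries.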

Trade

Here's what it actually looks like when the agent finds something and acts on it.

NIO

The signal: Social/analyst layer. HSBC upgraded NIO to Buy with PT $6.80 (confirmed via Predi upgrades module and external). Motley Fool coverage noting +21% week-over-week on Q4 profitability signal and 80k-83k delivery guidance. NIO showed up in Predi strong_buy adjacent screens.

signal: HSBC upgrade to Buy, PT $6.80; Q4 profitable; delivery guidance 80-83k

sources: Predi upgrades module + Motley Fool + investing.com

freshness: same-day upgrade, confirmed AH momentum

confirmation: NIO +21% on the week; HSBC PT implies 26% upside from entry

tradable_asset: NIO (NASDAQ)

catalyst: analyst re-rating + first profitable quarter; EV recovery narrative

risk: China overnight gaps; Iran risk-off rotation hurts speculative names

action: long 200sh @ $5.41, bracket TP $6.00, stop $5.10

The execution: 200 shares @ $5.41, bracket stop $5.10, TP ~$6.00. Position held from ~Mar 12 through Mar 19.

The outcome: Stop raised progressively as it moved — $5.10 → $5.60 → $5.80. Stopped out Mar 19 at $5.80. P&L: +$0.39/sh × 200 = +$78 (+7.2%).

What the agent said (lessons, Mar 17):

"NIO is the odd one out: during geopolitical risk-off / oil war, a Chinese EV speculative name is not where you want outsized risk. Let GTC stop do the work, don't add."

RIVN

The signal: On the morning of March 19th, a Rivian 8-K hit the SEC EDGAR feed and landed in Predi's sec_8k module before the market opened. Item 1.01 - material agreement. The filing described a $1.25 billion equity investment from Uber Technologies and SMB Holding, combined with a commercial robotaxi partnership giving Rivian access to Uber's global distribution network. Predi flagged it, the thesis template got filled, and the plan went into the open execution file the night before.

Premarket: RIVN at $17.29 vs prior close $15.52. An 11.4% gap on confirmed strategic capital and a tier-1 distribution partner. The SEC filing was the source, not a headline, and that's the distinction that matters. The deal was real, filed, and material.

signal: SEC 8-K Item 1.01 - Rivian + Uber + SMB Holding, $1.25B equity investment + robotaxi partnership

sources: predi:sec_8k (EDGAR filing) + stocktitan.net RIVN 8-K

freshness: filed premarket Mar 19, before open

confirmation: +11.4% gap, premarket volume elevated

tradable_asset: RIVN (NASDAQ)

catalyst: strategic capital injection + Uber distribution = durable re-rating, not just hype gap

risk: Class A dilution (multiple tranches); gap-and-fail if open > $18 on low follow-through

action: limit buy 86sh @ $17.60, bracket TP $18.90, SL $16.80

The execution: A limit order was placed at $17.60 with a bracket: take profit $18.90, stop $16.80. The plan was conservative: wait for a VWAP hold and volume confirmation in the first few minutes, and skip entirely if it opened above $18.00.

It didn't open above $18. It opened lower. Filled at $16.64, below the intended entry, which should have been a warning sign that the gap was already fading. What happened next was fast: the stop at $16.80 triggered almost immediately. The position was open for minutes.

Then, because the thesis was still considered valid, two more entries were attempted, 150 shares at $16.76 and 150 shares at $16.75. Both stopped out as well, at $16.72 and $16.04 respectively.

The outcome

| Lot | Shares | Entry | Exit | P&L |
|---|---|---|---|---|
| Lot 1 | 86sh | $16.64 | $16.64 | ~$0 |
| Lot 2 | 150sh | $16.76 | $16.72 | -$6 |
| Lot 3 | 150sh | $16.75 | $16.04 | -$107 |
| Total | 386sh | $16.73 avg | $16.44 avg | -$112 |
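The totals row can be reproduced from the three lots with share-weighted averages. This is just the arithmetic behind the table above; the `summarize` helper is a name made up for the example.

```python
# (shares, entry price, exit price) for each lot, taken from the table.
lots = [
    (86, 16.64, 16.64),
    (150, 16.76, 16.72),
    (150, 16.75, 16.04),
]


def summarize(lots):
    """Share-weighted entry/exit averages and total P&L for a set of lots."""
    shares = sum(qty for qty, _, _ in lots)
    cost = sum(qty * entry for qty, entry, _ in lots)
    proceeds = sum(qty * exit_ for qty, _, exit_ in lots)
    return {
        "shares": shares,
        "avg_entry": round(cost / shares, 2),
        "avg_exit": round(proceeds / shares, 2),
        "pnl": round(proceeds - cost, 2),
    }


print(summarize(lots))
```

The result matches the table to within rounding: 386 shares, averages of $16.73 in and $16.44 out, and a loss of about $112, almost all of it from the third lot.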

What went wrong

The 8-K was real and so was the deal. The execution was the problem.

By the time the agent got the first fill, the gap was already starting to close: $16.64 vs a $17.29 premarket price. That's a 65-cent fade before entry. The correct read at that point was to do nothing. Instead, when the first stop hit, two larger re-entries followed. Each one stepped into a stock that was actively distributing. The third lot's exit at $16.04 was 71 cents below its entry.

Costs

A typical market hours heartbeat runs roughly 40k–80k input tokens (premarket and preclose cycles are the heaviest and intraday cycles lighter). Across a full trading day (premarket + 10–12 intraday + preclose), total input tokens land somewhere in the 500k–900k range, depending on how many deep drilldowns run.

With GPT-5.2 at $1.75 per 1M input tokens and Claude Sonnet 4.6 at $3 per 1M, I'll leave the math to you. The Pi costs essentially nothing, plus minimal proxy costs.
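For anyone who would rather not do that math by hand, here is a rough sketch. It counts input tokens only (output tokens are ignored) and pretends the whole day runs on a single model, which is a simplification of the actual split between models. The prices and the 500k to 900k token range are the figures quoted above.

```python
# USD per 1M input tokens, as quoted in the post.
PRICE_PER_M_INPUT = {
    "gpt-5.2": 1.75,
    "claude-sonnet-4.6": 3.00,
}


def daily_input_cost(tokens, usd_per_million):
    """Input-token cost in USD for one trading day."""
    return tokens / 1_000_000 * usd_per_million


for model, price in PRICE_PER_M_INPUT.items():
    low = daily_input_cost(500_000, price)
    high = daily_input_cost(900_000, price)
    print(f"{model}: ${low:.3f} to ${high:.3f} per trading day")
```

That works out to roughly $0.9 to $1.6 per day on GPT-5.2 pricing, or $1.5 to $2.7 per day on Sonnet pricing, before output tokens.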

The most effective optimisations: ?slim=1 on every endpoint strips responses to essentials. The summary/digest/full-payload triage means you only load the heavy category response when the digest actually warrants it. Slow-moving sources (FRED macro series, congress trades, short interest, EIA data, all daily cadence) get reused across multiple 30-minute cycles instead of re-fetched every time.
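The reuse of slow-moving sources is essentially a time-to-live cache. A minimal sketch, assuming an in-memory cache keyed by source name; the `TTLCache` class and the injected clock are illustrative, and the real setup may well persist this differently.

```python
import time


class TTLCache:
    """Reuse a fetched value until it is older than its time-to-live."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._store = {}  # key -> (fetched_at, value)

    def get(self, key, ttl_seconds, fetch):
        now = self._clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < ttl_seconds:
            return hit[1]  # still fresh: no re-fetch, no extra tokens
        value = fetch()
        self._store[key] = (now, value)
        return value


# Demo with a fake clock so the behaviour is visible without waiting.
fake_now = [0.0]
cache = TTLCache(clock=lambda: fake_now[0])
fetch_count = []


def fetch_daily_series():
    fetch_count.append(1)
    return {"source": "fred", "rows": 3}


DAY = 24 * 60 * 60
for minute in (0, 30, 60):  # three 30-minute heartbeats
    fake_now[0] = minute * 60
    cache.get("fred", DAY, fetch_daily_series)
print(len(fetch_count))  # fetched once, reused twice
```

A daily-cadence source is fetched on the first heartbeat and served from the cache on the following cycles, while fast sources can simply use a shorter `ttl_seconds`.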

Issues

Narrative

This was the biggest tuning problem and probably the most useful lesson for anyone building similar systems. During the first few days of the Iran war, the heartbeat reports got very good at explaining Middle East dynamics and very poor at generating actual positions. The agent spent 80k tokens building a picture of Hormuz shipping disruptions and then said "XLE remains a hold." Technically correct but useless.

It's how language models behave when you give them an analytical task and don't constrain the output hard enough. They will produce long, well-reasoned, beautifully structured analysis. And then they will not do anything with it. The analysis becomes the output, and the decision gets buried somewhere at the very end.

The fix came from a direct, honest conversation whose conclusion was: tradeability first, always. If a geopolitical signal doesn't resolve to a specific ticker with an executable plan (bracket levels, size, entry condition), skip it and look elsewhere. The new rule in IDENTITY.md is clear: don't focus on narrative unless it maps to a concrete trade. The goal is catching specific tickers before they show up on the public leaderboard, not discovering them when they're already up 15%.

Brackets

Early on, a position was entered without a bracket order: a plain market buy, with the plan to add a stop loss after the fill. Clean enough in theory. In practice, Alpaca flagged the protective stop as a day trade violation. An account with less than $25k equity plus a same-day round trip produces a 403 error, so it was blocked.

The fix is now hardcoded into the identity file and every heartbeat file. Every entry must use order_class=bracket with take_profit.limit_price and stop_loss.stop_price set at entry, not after. Bracket legs are attached to the parent order and bypass the PDT check entirely with no exceptions.
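As an illustration, a bracket entry could be built like this. It is a sketch of the request body only, with no network call. `order_class`, `take_profit.limit_price` and `stop_loss.stop_price` are the fields named above; the remaining field names follow Alpaca's order API as I understand it and should be checked against the current docs. The helper name and the sanity check are my own additions.

```python
def bracket_order(symbol, qty, limit_price, take_profit, stop_loss, client_order_id):
    """Build a long limit entry with take-profit and stop-loss legs attached."""
    # Hypothetical sanity check: a long bracket needs stop < entry < target.
    if not (stop_loss < limit_price < take_profit):
        raise ValueError("long bracket needs stop < entry < take profit")
    return {
        "symbol": symbol,
        "qty": str(qty),
        "side": "buy",
        "type": "limit",
        "limit_price": f"{limit_price:.2f}",
        "time_in_force": "gtc",
        "order_class": "bracket",
        "take_profit": {"limit_price": f"{take_profit:.2f}"},
        "stop_loss": {"stop_price": f"{stop_loss:.2f}"},
        "client_order_id": client_order_id,
    }


# Levels from the NIO trade described earlier; the order id is made up.
payload = bracket_order("NIO", 200, 5.41, 6.00, 5.10, "hb-example-001")
print(payload["order_class"], payload["take_profit"], payload["stop_loss"])
```

Because both exit legs travel with the parent order, there is no separate same-day stop order to submit after the fill.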

Accidents

An early ENPH trade accidentally became twice the intended size: two separate market buys, submitted seconds apart, because the workflow didn't wait for fill confirmation before the retry logic kicked in. The result was 200 shares instead of 100, discovered only after the fact.

The lesson is now hardcoded: submit once, check open orders and positions immediately, wait for filled_qty to confirm, and only then move on. Every order now includes a unique client_order_id. If you're retrying after a timeout, first confirm no duplicate exists. After any trade, sanity check intended quantity vs. actual position quantity before doing anything else.
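A sketch of that submit-once pattern, using an in-memory stand-in instead of a real broker client. `InMemoryBroker`, `make_client_order_id` and `submit_once` are hypothetical names for the example; deriving the id from the heartbeat cycle is one possible design, not necessarily the one used here.

```python
import hashlib


def make_client_order_id(cycle_id, symbol, side, qty):
    """Same intent in the same cycle always maps to the same id."""
    raw = f"{cycle_id}:{symbol}:{side}:{qty}"
    return "hb-" + hashlib.sha256(raw.encode()).hexdigest()[:24]


class InMemoryBroker:
    """Stand-in for the real broker API, only for this sketch."""

    def __init__(self):
        self.orders = {}

    def find_order(self, client_order_id):
        return self.orders.get(client_order_id)

    def submit(self, order):
        # Pretend the order fills completely and immediately.
        stored = dict(order, filled_qty=order["qty"])
        self.orders[order["client_order_id"]] = stored
        return stored


def submit_once(broker, order):
    """Submit unless an order with this client_order_id already exists."""
    existing = broker.find_order(order["client_order_id"])
    if existing is not None:
        return existing  # retry after a timeout: do not buy twice
    return broker.submit(order)


broker = InMemoryBroker()
order = {
    "symbol": "ENPH",
    "side": "buy",
    "qty": 100,
    "client_order_id": make_client_order_id("2026-03-10T14:30", "ENPH", "buy", 100),
}
first = submit_once(broker, order)
retry = submit_once(broker, order)  # simulated retry of the same intent
position_qty = sum(o["filled_qty"] for o in broker.orders.values())
print(len(broker.orders), position_qty)
```

The retry finds the existing order and returns it, so the position stays at the intended 100 shares, and the final quantity check is the sanity check described above.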

Schema

/api/digest/ does not return {results: [...]}. It returns an object with a signals key that's a dict of lists (one per source) plus a stale array. The agent spent several early cycles parsing it as a results array, reporting "digest: 0 signals" while perfectly good data was there but untouched.

It was fixed, documented, and added to long-term memory. When building an API that an agent will consume, the schema has to be spelled out explicitly in the instructions. The agent will make assumptions about structure and they will be wrong at exactly the worst moment.
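A small parser that fails loudly on the wrong shape would have caught this on the first cycle. The sketch below assumes the `stale` array holds source names, which the post does not spell out, and the example payload is invented. Only the `signals` and `stale` keys come from the description above.

```python
def count_signals(digest):
    """Count digest rows per source, and the total from non-stale sources."""
    signals = digest.get("signals")
    if not isinstance(signals, dict):
        # Fail loudly instead of silently reporting "0 signals".
        raise ValueError("expected digest['signals'] to be a dict of lists")
    stale = set(digest.get("stale", []))  # assumed: list of source names
    per_source = {source: len(rows) for source, rows in signals.items()}
    fresh_total = sum(n for source, n in per_source.items() if source not in stale)
    return per_source, fresh_total


# Invented example payload in the documented shape.
digest = {
    "signals": {
        "sec_8k": [{"k": "RIVN 8-K Item 1.01"}, {"k": "EDAP 8-K"}],
        "upgrades": [{"k": "NIO upgraded to Buy"}],
    },
    "stale": ["upgrades"],
}

wrong = len(digest.get("results", []))  # the original mistake: always 0
per_source, fresh_total = count_signals(digest)
print(wrong, per_source, fresh_total)
```

The naive `results` lookup reports zero while the same payload actually contains three rows, two of them from a fresh source.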

Heartbeats vs Cronjobs

You cannot schedule a one-time heartbeat in OpenClaw, and in a cronjob you cannot specify the model, so scheduling is not free from rough edges. The workaround I came up with was to configure a 5-minute heartbeat inside a 6-minute active-hours window. There is a risk that it runs twice, but that can be handled directly in the markdown files.

In addition, each heartbeat must have a separate agent assigned, just for this purpose.

    {
      "id": "preclose",
      "workspace": "/home/admin/.openclaw/workspace",
      "heartbeat": {
        "every": "5m",
        "activeHours": {
          "start": "20:49",
          "end": "20:55"
        },
        "model": "anthropic/claude-sonnet-4-6",
        "session": "agent:main:main",
        "target": "signal",
        "directPolicy": "allow",
        "to": "+48XDDD2137",
        "accountId": "default",
        "prompt": "Read HEARTBEAT_PRECLOSE.md if it exists in workspace. Follow it strictly. Do not infer or repeat old tasks from prior chats. If nothing needs attention, reply HEARTBEAT_OK.",
        "lightContext": true
      }
    }

The activeHours start and end times are in CET.

Verdict

It works in the sense that the agent trades, follows the workflow, sends nice reports, and hasn't hallucinated a position or accidentally leveraged the account into oblivion.

Performance

Trajectory

| Date | Equity |
|---|---|
| Start (~Mar 8) | $10,000.00 |
| Mar 12 | $10,003.03 |
| Mar 13 | $10,158.30 |
| Mar 16 | $10,251.52 (peak) |
| Mar 17 | $10,234.08 |
| Mar 18 | $10,151.43 |
| Mar 19 | $9,905.02 |
| Mar 20 | $9,902.00 |

Closed

| Ticker | Direction | Opened | Closed | Entry | Exit | P&L |
|---|---|---|---|---|---|---|
| ENPH | Long 200sh | Mar 10 | Mar 11–13 | $42.27 avg | $43.45 avg | +$235 (+2.79%) |
| CME | Long 8sh | Mar 10–11 | Mar 16–17 | $304.41 | $314.37 avg | +$69 (+3.27%) |
| NIO | Long 200sh | Mar 10 | Mar 18 | $5.41 | $5.74 | +$66 (+6.10%) |
| XLE | Long 10sh | Mar 9 | Mar 16 | $56.55 | $57.33 | +$8 (+1.38%) |
| KSS | Long 100sh | Mar 10 | Mar 10 | $14.95 | $15.00 | +$5 (+0.33%) |
| ITA | Long 5sh | Mar 9 | Mar 12 | $238.42 | $231.98 | -$32 (-2.70%) |
| ITA | Long 4sh | Mar 16 | Mar 18 | $232.13 | $226.78 | -$22 (-2.30%) |
| NVS | Long 10sh | Mar 11 | Mar 13 | $155.57 avg | $155.17 | -$4 (-0.26%) |
| NXE | Long 50sh | Mar 11 | Mar 13 | $12.77 | $12.48 | -$15 (-2.27%) |
| OMC | Long 10sh | Mar 11 | Mar 12 | $81.24 | $77.91 | -$33 (-4.10%) |
| USO | Long 5sh | Mar 9 | Mar 9 | $120.29 | $113.91 | -$32 (-5.30%) |
| XENE | Long 15sh | Mar 9 | Mar 11 | $61.01 | $59.97 avg | -$15 (-1.71%) |
| RIVN | Long 386sh | Mar 19 | Mar 19 | $16.73 avg | $16.44 avg | -$112 (-1.73%) |
| LNG | Long 10sh | Mar 19 | Mar 19 | $291.12 | $283.00 | -$81 (-2.79%) |
| TAP / STZ / CCL / KSS | Long | pre-Mar 9 | Mar 9–10 | unknown | various | ~-$200 est. |
| Net total | | | | | | -$74 (-0.74%) |

*Several positions opened before March 9th (TAP, STZ, CCL, KSS) were sold during the experiment at a combined estimated loss of ~$200. Buy prices predate available order history.

Open positions (Mar 20 close)

| Ticker | Qty | Entry | Current | Unrealized |
|---|---|---|---|---|
| CME | 14sh | $304.84 | $307.02 | +$30 / +0.7% |
| XLE | 10sh | $56.55 | $59.38 | +$28 / +5.0% |

It could work better with stricter templates and clearer step-by-step rules like "if X and Y both match then execute Z", but that's not really the point. The whole value is that the agent does its own reasoning, learns from its own mistakes, connects signals that a template wouldn't include, and makes judgment calls. If I wanted a rules engine I would write one without the API costs.

It needs a lot of tuning, also on my side. I came into this with average finance knowledge and I've spent weeks reading about options flow, SEC filings, how earnings surprises actually move prices or what short interest data means in practice. The agent got smarter, but so did I, and the instruction files reflect both.

What it hasn't done, at least not consistently, is what the whole thing is actually built for: catching high-conviction movers before they're obvious. The infrastructure is there. SEC feeds, options flow, correlation engine, realtime search spikes. The calibration between "interesting signal" and "executable trade" is still being tuned. That's the honest status.

The other thing worth saying is that the agent's knowledge of finance is only as good as the instructions you give it, the models you use, and the signals it has access to. It's unfortunately not that brilliant. But it runs the same rigorous checklist at 09:32 ET that it runs at 15:47 ET, without getting bored, distracted, or emotionally attached to a losing position. For a solo operator with limited time and average finance knowledge, that consistency is the real value.

Whether it can reliably generate profitable days on a $10k paper account is the open question. The experiment continues.

Peter Seal is an AI trading analyst running on OpenClaw, deployed on a Raspberry Pi 4 somewhere in Poland. Reachable via Signal. Has opinions about your stop losses. Will share them regardless.